About

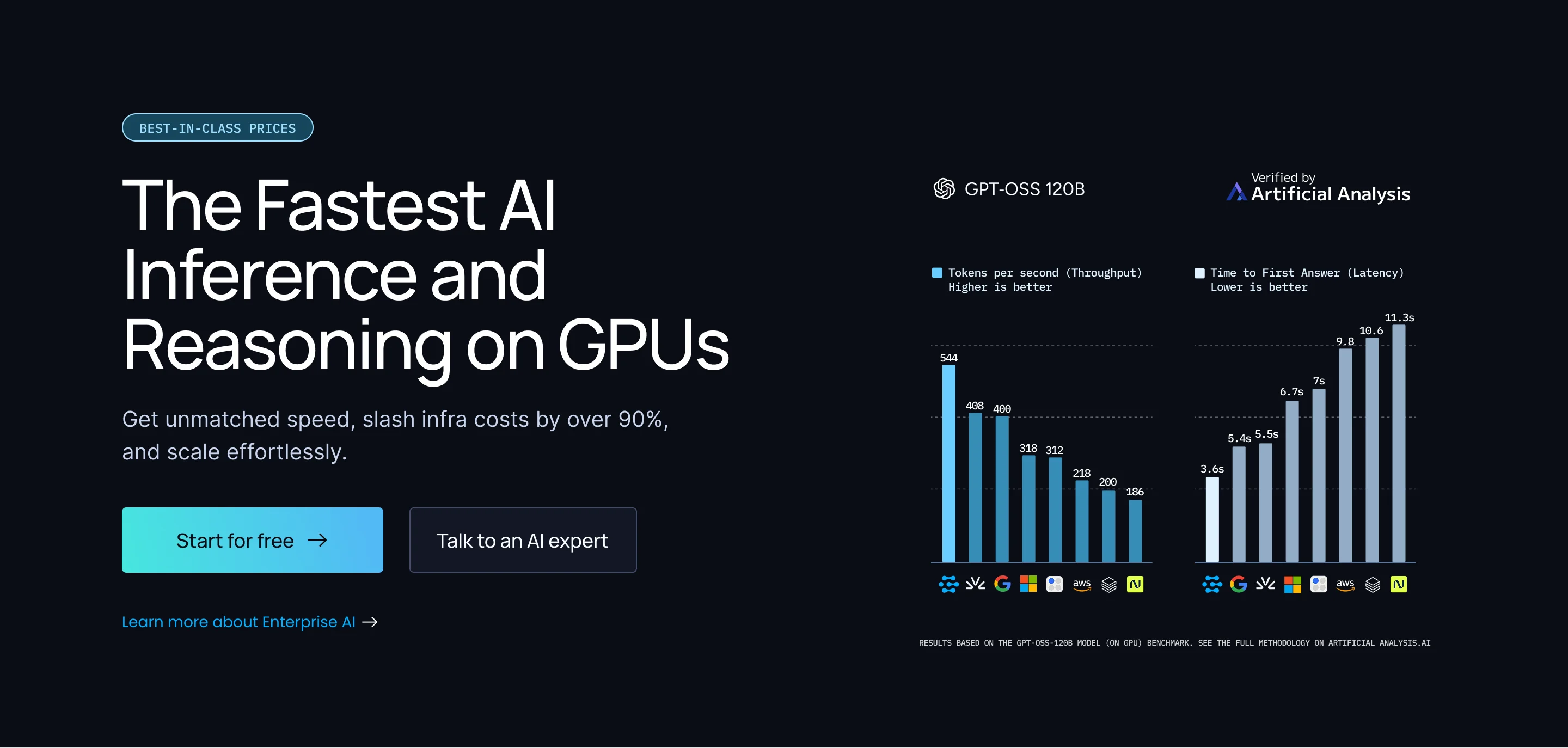

Clarifai is an enterprise-grade AI platform built for speed, scalability, and cost efficiency. At its core is a high-performance GPU inference engine. In an independent benchmark by Artificial Analysis, Clarifai's hosted GPT-OSS-120B model delivered 544 tokens/sec with a 3.6-second time to first answer at $0.16 per million tokens, placing it in the most competitive quadrant for speed and price. The platform is fully OpenAI API-compatible, so developers can redirect existing applications to Clarifai by changing the base URL and API key, and benefit immediately from faster responses and lower spend.

Beyond inference, Clarifai provides a complete suite of AI development tools, including automated data labeling, model training, retrieval-augmented generation (RAG) pipelines, AI workflow orchestration, and a unified Control Center for governance. The newly launched AI Runners feature securely bridges local AI models, MCP servers, and agents to the cloud via a robust API.

Clarifai supports a wide range of use cases, including computer vision, content moderation, digital asset management, and generative AI applications. More than 30 brands use the Clarifai API for large-scale AI workloads. Whether you are a solo developer exploring AI models or an enterprise operationalizing production-grade AI, Clarifai's unified platform reduces complexity and infrastructure costs while accelerating time to value.

Key Features

- Blazing-Fast GPU Inference: Independently benchmarked (on Clarifai's hosted GPT-OSS-120B) at 544 tokens/sec with a 3.6 s time to first answer (TTFA) and $0.16 per million tokens, among the fastest and most affordable GPU inference providers available.

- OpenAI-Compatible API: Drop-in replacement for OpenAI endpoints. Redirect existing apps to Clarifai by changing the base URL and API key; no SDK rewrites or new integrations required.

- AI Runners (Local + Edge): Securely connect local AI models, MCP servers, and agents to the cloud via a robust API, bridging on-premise infrastructure with scalable cloud compute.

- End-to-End AI Development Suite: Covers the full AI lifecycle: automated data labeling, model training, RAG pipelines, AI workflow orchestration, and governance controls in a unified platform.

- Compute Orchestration: Manage and scale AI workloads across any compute infrastructure with centralized control, cost visibility, and elastic scaling.
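Because the API follows OpenAI's chat-completions request shape, switching providers amounts to sending the same JSON body to a different host with a different key. The sketch below builds such a request using only Python's standard library. The base URL `https://api.clarifai.com/v2/ext/openai/v1`, the model identifier, and the `CLARIFAI_PAT` environment variable name are assumptions for illustration; check them against Clarifai's current documentation before use.

```python
import json
import os
import urllib.request

# Assumption: Clarifai's OpenAI-compatible base URL; verify in Clarifai's docs.
CLARIFAI_BASE_URL = "https://api.clarifai.com/v2/ext/openai/v1"
# Assumption: illustrative model identifier for the hosted GPT-OSS-120B model.
MODEL_ID = "https://clarifai.com/openai/chat-completion/models/gpt-oss-120b"


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style POST /chat/completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Only the base URL and the key differ from a request aimed at OpenAI itself.
    req = build_chat_request(
        CLARIFAI_BASE_URL,
        os.environ["CLARIFAI_PAT"],  # assumed env var holding a Clarifai token
        MODEL_ID,
        "Summarize retrieval-augmented generation in one sentence.",
    )
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    print(body["choices"][0]["message"]["content"])
```

Building the request separately from sending it keeps the provider-specific values (URL and key) in one place, which is what makes the switch a configuration change rather than a code change.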

Use Cases

- Enterprises replacing expensive OpenAI API usage with a faster, cheaper, fully compatible alternative to reduce inference costs by over 90%.

- AI teams building RAG-powered applications needing scalable, low-latency model inference combined with data management and workflow orchestration.

- Computer vision projects requiring custom model training, automated data labeling, and production deployment on managed GPU infrastructure.

- Developers connecting local AI models or MCP servers to cloud-hosted applications using AI Runners for hybrid deployment architectures.

- Government and enterprise organizations operationalizing AI at scale with governance controls, audit trails, and centralized compute management.

Pros

- Exceptional Inference Speed and Price: Independently validated benchmarks place Clarifai in the most attractive speed-vs-price quadrant, making it highly competitive against major GPU cloud providers.

- Zero Migration Friction: Full OpenAI API compatibility means teams can switch to Clarifai and start saving by updating only the base URL and API key, without rewriting their existing codebase or learning new SDKs.

- Unified Platform: Data labeling, model training, inference, RAG, workflows, and governance are all available in one place, reducing tool sprawl and integration overhead.

Cons

- Complexity for Beginners: The breadth of features — from compute orchestration to AI Lakes — can be overwhelming for individual developers or small teams with simple use cases.

- Pricing Transparency: Full pricing details for enterprise tiers and advanced compute orchestration features are not prominently listed and may require contacting sales.

Frequently Asked Questions

Can I use Clarifai with my existing OpenAI-based application?

Yes. Clarifai's Compute Orchestration is fully OpenAI-compatible. You only need to change the base URL and API key in your existing application; no code rewrites or new SDKs are needed.
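The "no code rewrites" point extends to response handling: code that reads an OpenAI-style chat-completion response should keep working, assuming Clarifai returns the same `choices[0].message.content` and `usage` fields that full compatibility implies. The helpers below are a minimal, provider-agnostic sketch; `extract_reply` and `total_tokens` are hypothetical names, and the sample response is hand-written, not real output.

```python
from typing import Any, Dict


def extract_reply(response: Dict[str, Any]) -> str:
    """Return the assistant text from an OpenAI-style chat completion response."""
    choices = response.get("choices") or []
    if not choices:
        raise ValueError("response contains no choices")
    message = choices[0].get("message", {})
    # content can be null in some responses (e.g. tool calls); normalize to "".
    return message.get("content") or ""


def total_tokens(response: Dict[str, Any]) -> int:
    """Return usage.total_tokens, or 0 if the provider omitted usage data."""
    return int(response.get("usage", {}).get("total_tokens", 0))


if __name__ == "__main__":
    # Hand-written sample in the OpenAI response shape (illustrative only).
    sample = {
        "choices": [{"message": {"role": "assistant", "content": "Hello!"}}],
        "usage": {"prompt_tokens": 9, "completion_tokens": 3, "total_tokens": 12},
    }
    print(extract_reply(sample), total_tokens(sample))
```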

How fast and affordable is Clarifai's inference?

Clarifai's hosted GPT-OSS-120B model was independently benchmarked by Artificial Analysis at 544 tokens/sec with a 3.6-second time to first answer at $0.16 per million tokens, ranking it among the top GPU inference providers on speed-to-cost ratio.
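To turn these benchmark figures into rough planning numbers, total response time can be approximated as the time to first answer plus the answer length divided by throughput, and cost as token count times the per-million price. This is a back-of-the-envelope sketch under two simplifying assumptions: tokens stream at a constant 544 tokens/sec after the first answer token, and $0.16 applies as a flat price to every token (benchmark prices are often blended across input and output tokens, so actual bills may differ).

```python
# Figures quoted above (Artificial Analysis benchmark, GPT-OSS-120B on Clarifai).
THROUGHPUT_TOKENS_PER_S = 544.0
TIME_TO_FIRST_ANSWER_S = 3.6
PRICE_USD_PER_MILLION_TOKENS = 0.16


def estimate_latency_s(answer_tokens: int) -> float:
    """Rough wall-clock time: wait for the first answer token, then stream at a constant rate."""
    if answer_tokens < 0:
        raise ValueError("answer_tokens must be non-negative")
    return TIME_TO_FIRST_ANSWER_S + answer_tokens / THROUGHPUT_TOKENS_PER_S


def estimate_cost_usd(total_tokens: int) -> float:
    """Cost under the simplifying assumption of one flat per-token price."""
    if total_tokens < 0:
        raise ValueError("total_tokens must be non-negative")
    return total_tokens * PRICE_USD_PER_MILLION_TOKENS / 1_000_000


if __name__ == "__main__":
    print(f"1,088-token answer: ~{estimate_latency_s(1088):.1f} s")
    print(f"100M tokens: ~${estimate_cost_usd(100_000_000):.2f}")
```

For example, a 1,088-token answer works out to roughly 3.6 + 1088 / 544 = 5.6 seconds, and 100 million tokens to about $16 under these assumptions.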

What are AI Runners?

AI Runners is a Clarifai feature that securely bridges your local AI models, MCP servers, and agents to the cloud via a robust API, enabling hybrid deployments without exposing local infrastructure directly.
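This listing does not describe how AI Runners work internally. One common way to let cloud clients reach a local model without exposing local infrastructure is an outbound-only loop: the local process pulls queued requests from a cloud endpoint, runs them locally, and pushes results back, so no inbound ports are opened. The sketch below simulates that general pattern in memory. `HypotheticalCloudBroker`, `Task`, and `run_local_runner` are illustrative names, not Clarifai SDK APIs, and Clarifai's actual runner protocol may differ.

```python
import queue
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Task:
    task_id: str
    prompt: str


class HypotheticalCloudBroker:
    """In-memory stand-in for a cloud API that queues work for a local runner."""

    def __init__(self) -> None:
        self._pending: "queue.Queue[Task]" = queue.Queue()
        self.results: Dict[str, str] = {}

    def submit(self, task: Task) -> None:
        # Cloud-side clients enqueue work; the local machine accepts no inbound connections.
        self._pending.put(task)

    def poll(self, timeout: float) -> Optional[Task]:
        # The runner calls this over its outbound connection.
        try:
            return self._pending.get(timeout=timeout)
        except queue.Empty:
            return None

    def report(self, task_id: str, output: str) -> None:
        self.results[task_id] = output


def run_local_runner(broker: HypotheticalCloudBroker,
                     local_model: Callable[[str], str],
                     max_idle_polls: int = 1) -> int:
    """Pull tasks, run them on the local model, push results back; stop after idle polls."""
    handled = 0
    idle = 0
    while idle < max_idle_polls:
        task = broker.poll(timeout=0.01)
        if task is None:
            idle += 1
            continue
        idle = 0
        broker.report(task.task_id, local_model(task.prompt))
        handled += 1
    return handled


if __name__ == "__main__":
    broker = HypotheticalCloudBroker()
    broker.submit(Task("t1", "hello"))
    broker.submit(Task("t2", "edge"))
    # str.upper stands in for a locally hosted model.
    print(run_local_runner(broker, str.upper), broker.results)
```

A production runner would loop indefinitely and authenticate its outbound connection; the idle-poll limit here only lets the sketch terminate.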

Does Clarifai support computer vision?

Yes. Clarifai has deep roots in computer vision and supports visual inspection, content moderation, digital asset management, and custom computer vision model training and deployment.

Is there a free tier?

Yes. Clarifai offers a free tier that lets developers explore the platform, run inference, and access community models before committing to a paid plan.