About

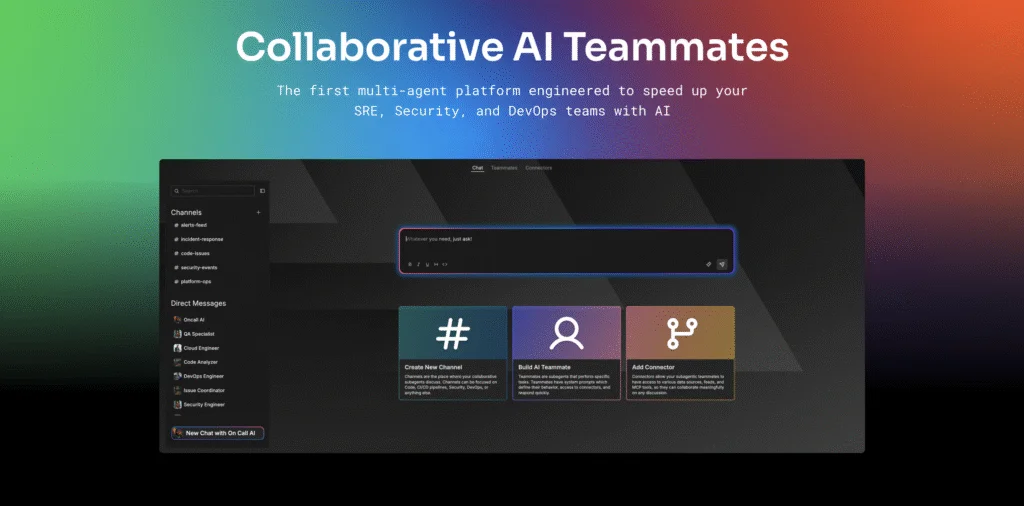

Edge Delta is a next-generation observability platform built for the AI era, offering a suite of Collaborative AI Teammates that autonomously monitor, investigate, and respond to production incidents. Rather than replacing human engineers, Edge Delta's configurable AI agents (including SRE, Security Engineer, Code Analyzer, Issue Coordinator, and Anomaly Detection agents) filter noise, correlate signals, and deliver full incident context before human experts even engage.

The platform integrates with a broad ecosystem of tools and data sources including AWS, GitHub, CircleCI, LaunchDarkly, Databricks, Kubernetes, Slack, Teams, PagerDuty, and more. It supports leading AI models from Anthropic (Claude), OpenAI (Codex/GPT), Google (Gemini), Meta (Llama), Mistral, and Grok, enabling teams to bring their preferred inference stack. Edge Delta's Intelligent Telemetry Pipelines process logs, metrics, and traces in real time, while Security Data Pipelines enforce data privacy, governance, and PII filtering to meet enterprise compliance requirements. Out-of-the-box system prompts for SRE, Security, and DevOps agents mean teams can get started in minutes without complex configuration.

Recognized as a Gartner® Cool Vendor™ in Monitoring and Observability and SOC 2 Type II certified, Edge Delta is trusted by organizations like Remitly, Seattle Bank, and PACCAR. It is ideal for DevOps, SRE, and security teams operating at scale who need to reduce manual toil in log analysis, anomaly detection, and incident response.

Key Features

- Collaborative AI Teammates: Pre-built and customizable AI agents for SRE, Security Engineering, Code Analysis, and Issue Coordination that autonomously investigate and respond to incidents.

- Intelligent Telemetry Pipelines: Real-time streaming pipelines that ingest and process logs, metrics, and traces from cloud, Kubernetes, and third-party services.

- Multi-Model AI Support: Supports leading LLMs including Claude (Anthropic), GPT/Codex (OpenAI), Gemini (Google), Grok, Llama, and Mistral for flexible AI inference.

- Security Data Pipelines & Governance: Built-in PII filtering, data privacy controls, and governance guardrails to ensure compliant AI workflows across sensitive telemetry data.

- Broad Ecosystem Integrations: Connects with AWS, GitHub, CircleCI, LaunchDarkly, Databricks, Kubernetes, Slack, Teams, PagerDuty, and community MCP tooling out of the box.

Use Cases

- SRE teams using AI agents to automatically triage production incidents and deliver correlated root-cause context before human escalation.

- Security engineering teams leveraging AI-driven anomaly detection and PII filtering to monitor sensitive telemetry data while maintaining compliance.

- DevOps teams automating log analysis and reconciliation processes to shift time from reactive operations to proactive development.

- Platform engineering organizations centralizing observability across distributed Kubernetes and cloud-native environments with intelligent telemetry pipelines.

- Enterprise engineering teams deploying custom AI agents with tailored system prompts to enforce organization-specific investigation and response workflows.

Pros

- Autonomous Incident Investigation: AI agents gather context and correlate signals automatically, so engineers jump in with the full picture rather than scrambling to stitch data together.

- Fast Time to Value: Pre-built agent prompts and connector templates mean teams can deploy and start receiving insights in minutes, not days.

- Enterprise-Grade Security: SOC 2 Type II certified with PII filtering and data governance built into pipelines, making it suitable for regulated industries.

- Model Flexibility: Supports models from Anthropic, OpenAI, Google, Meta, Mistral, and Grok, giving teams freedom to choose or swap inference models without platform lock-in.

Cons

- Primarily Enterprise-Focused: The platform's depth and feature set may be more than small teams or solo developers need, with pricing and complexity skewing toward larger organizations.

- AI Cost Considerations: Using large AI models continuously for telemetry analysis can incur significant LLM inference costs depending on data volume and model selection.

- Learning Curve for Custom Agents: While out-of-the-box agents are straightforward, building and tuning custom AI teammates with specific system prompts requires deeper platform knowledge.

Frequently Asked Questions

AI Teammates are configurable autonomous agents (including SRE, Security Engineer, Code Analyzer, and Issue Coordinator) that monitor production environments, detect anomalies, investigate incidents, and deliver correlated context to human engineers automatically.

Edge Delta supports models from Anthropic (Claude Opus, Sonnet, and Haiku), OpenAI (GPT/Codex), Google (Gemini), Meta (Llama), Mistral, and Grok, along with legacy versions across providers.

Edge Delta is SOC 2 Type II certified and includes built-in PII filtering, data governance controls, and security data pipelines that let teams restrict what data flows into AI workflows.

Edge Delta integrates with AWS, GitHub, CircleCI, LaunchDarkly, Databricks, Kubernetes, Slack, Microsoft Teams, and PagerDuty. It also supports A2A (Agent2Agent) and community MCP (Model Context Protocol) tooling for extensibility.

Yes, Edge Delta offers a free trial. Teams can get started quickly by connecting their data sources and running pre-built AI agent configurations without a lengthy onboarding process.