About

Hopsworks is a comprehensive AI Lakehouse and MLOps platform designed for teams that need to build, deploy, and scale production machine learning systems. At its core is a Feature Store that provides sub-millisecond feature retrieval powered by RonDB, benchmarked at 10x lower latency than SageMaker and Vertex AI, enabling real-time inference at scale. The platform's AI Lakehouse layer works natively with open table formats including Apache Iceberg, Delta Lake, and Apache Hudi, offering Python-native queries that are 9–45x faster than comparable services like Databricks or SageMaker. No migrations or data conversions are required.

Hopsworks also provides a full-featured MLOps platform covering experiment tracking, a model registry, and automated deployment pipelines, reducing time-to-production by up to 10x. GPU and compute management capabilities support large-scale LLM training via Ray and serving through KServe/vLLM. A standout capability is Sovereign AI support: Hopsworks can run fully air-gapped, on-premises, or in hybrid configurations, giving regulated industries like financial services and government full control over data residency and AI operations.

Hopsworks is well-suited for data scientists, ML engineers, and enterprise teams building fraud detection, real-time recommendation engines, credit decisioning, RAG pipelines, and LLM-powered applications. A free SaaS public preview tier and pay-as-you-go pricing make it accessible to teams at any stage.

Key Features

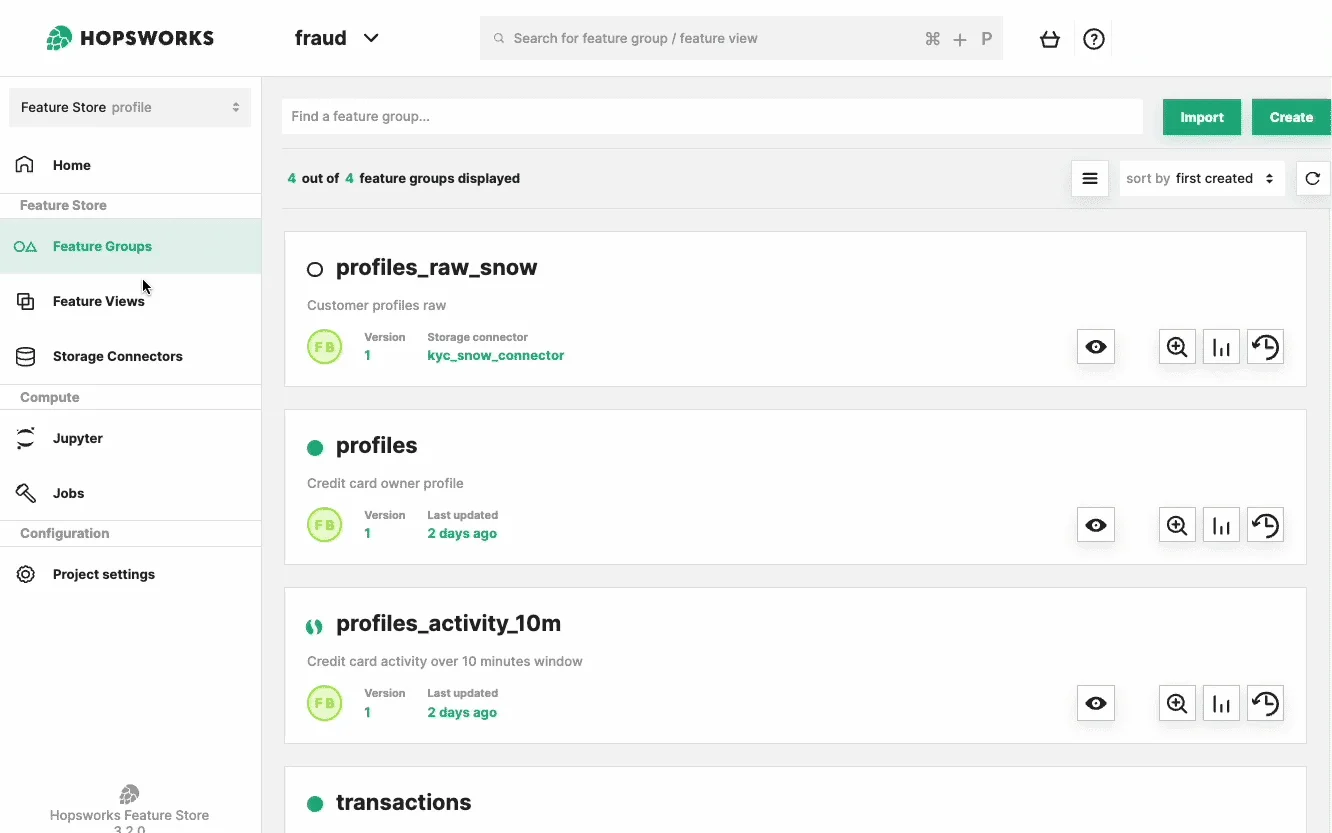

- Sub-Millisecond Feature Store: Centralized repository for ML feature data powered by RonDB, delivering <1ms retrieval latency — 10x faster than SageMaker and Vertex AI per SIGMOD 2024 benchmarks.

- Open-Format AI Lakehouse: Work directly with Apache Iceberg, Delta Lake, and Apache Hudi tables using a Python-native query engine — no data migrations required and 9–45x faster reads than Databricks or SageMaker.

- End-to-End MLOps Platform: Unified lifecycle management covering experiment tracking, model registry, and deployment pipelines, reducing time from experiment to production by up to 10x.

- GPU & LLM Compute Management: Smart GPU scheduling and quota management for training large models at scale with Ray, and serving via KServe or vLLM for high-throughput inference.

- Sovereign AI Deployment: Supports fully air-gapped, on-premises, and hybrid deployments, enabling regulated industries to maintain full data residency and AI operational control.

Use Cases

- Real-time credit card fraud detection using sub-millisecond online feature retrieval for instant transaction scoring.

- Personalized product recommendations at scale, as demonstrated by Zalando serving 50 million customers across 25 countries.

- Micro-lease and credit decisioning automation, replacing fragmented microservice pipelines with a unified ML platform.

- RAG (Retrieval-Augmented Generation) pipelines for LLM applications, with integrated embedding management and feature store support.

- Batch ML systems for churn prediction and customer lifetime value modeling using shared, reusable feature pipelines.
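
The retrieval step behind the RAG use case above can be illustrated with a minimal sketch in plain Python. This is not the Hopsworks API: the `cosine` and `top_k` helpers, the document IDs, and the three-dimensional vectors are all made up for illustration. A real pipeline would use embeddings from a model and a managed vector index.

```python
import math
from typing import Dict, List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: List[float], index: Dict[str, List[float]], k: int = 2) -> List[Tuple[str, float]]:
    """Return the k (doc_id, score) pairs most similar to the query embedding."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Hypothetical stored embeddings, keyed by document ID.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.0],
    "gift-cards": [0.0, 0.2, 0.9],
}

# Hypothetical embedding of the user's question.
hits = top_k([0.8, 0.2, 0.0], index, k=2)

# The retrieved document IDs would be resolved to text and added to the LLM prompt.
context = [doc_id for doc_id, _ in hits]
```

Here `context` lists the stored documents in descending order of similarity to the query, which is the piece a RAG pipeline passes on to the language model.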

Pros

- Industry-Leading Feature Retrieval Latency: Sub-millisecond online feature serving powered by RonDB gives real-time ML applications a significant performance edge over cloud-native alternatives.

- No Vendor Lock-In: Native support for open table formats (Iceberg, Delta, Hudi) and frameworks (Spark, Flink, Pandas, DuckDB) means teams can migrate or extend without costly refactoring.

- 80% Cost Reduction Through Feature Reuse: Shared feature definitions across models eliminate redundant computation, dramatically lowering infrastructure costs as ML adoption scales.

- Flexible Deployment Options: From SaaS public preview to on-premises and air-gapped sovereign deployments, Hopsworks fits a wide range of organizational security and compliance requirements.

Cons

- Steep Learning Curve for New Teams: The breadth of the platform — feature store, lakehouse, MLOps, and GPU management — can be overwhelming for teams without prior MLOps experience.

- Pricing Complexity at Scale: Pay-as-you-go pricing can become difficult to predict for large-scale production workloads, particularly with high-throughput real-time feature serving.

- Enterprise Focus May Overwhelm Small Projects: The platform is optimized for large-scale production ML systems, which may be more infrastructure than small or experimental projects require.

Frequently Asked Questions

What is a Feature Store?

A Feature Store is a centralized data repository for ML features: the transformed, curated data inputs that models learn from. It ensures feature consistency between training and serving (eliminating training-serving skew), enables reuse across models, and provides fast retrieval for real-time inference. Hopsworks' feature store supports both batch and sub-millisecond online access.
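
To make the training/serving consistency point concrete, here is a minimal sketch in plain Python. `ToyFeatureStore` and its methods are hypothetical stand-ins invented for illustration, not the Hopsworks API, and the card records are made-up data. The idea shown is only that one set of feature definitions feeds both the batch (training) view and the online (serving) lookup.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ToyFeatureStore:
    """In-memory stand-in for a feature store (illustrative, not a real API)."""
    transforms: Dict[str, Callable[[dict], float]] = field(default_factory=dict)
    offline: List[dict] = field(default_factory=list)       # full history, read in batch for training
    online: Dict[str, dict] = field(default_factory=dict)   # latest row per key, read at inference time

    def register(self, name: str, fn: Callable[[dict], float]) -> None:
        """Define a feature once; every reader shares this definition."""
        self.transforms[name] = fn

    def ingest(self, key: str, raw: dict) -> None:
        """Compute features once and write them to both stores."""
        row = {name: fn(raw) for name, fn in self.transforms.items()}
        self.offline.append({"key": key, **row})
        self.online[key] = row

    def training_rows(self) -> List[dict]:
        """Batch access: all historical feature rows."""
        return list(self.offline)

    def feature_vector(self, key: str) -> dict:
        """Online access: latest features for a single entity."""
        return self.online[key]


store = ToyFeatureStore()
store.register("amount_usd", lambda r: r["amount_cents"] / 100)
store.register("is_foreign", lambda r: float(r["country"] != r["home_country"]))

store.ingest("card-1", {"amount_cents": 1250, "country": "SE", "home_country": "SE"})
store.ingest("card-2", {"amount_cents": 99900, "country": "US", "home_country": "SE"})

train = store.training_rows()             # what a training job would read
serve = store.feature_vector("card-2")    # what a model server would read
```

Because both reads come from the same computed row, the values a model sees at inference time match the ones it was trained on. A production feature store adds versioning, point-in-time joins, and a low-latency database behind the online lookup.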

How does Hopsworks compare to Databricks and SageMaker?

Hopsworks offers 9–45x faster reads than Databricks or SageMaker for lakehouse queries, and 10x lower online feature serving latency than SageMaker per SIGMOD 2024 benchmarks. It also supports open table formats natively without proprietary migrations, and provides built-in feature reuse capabilities that neither competitor matches out of the box.

Can Hopsworks be deployed on-premises or in air-gapped environments?

Yes. Hopsworks supports sovereign AI deployments including air-gapped on-premises installations, hybrid configurations, and region-locked cloud environments. This makes it suitable for government, defense, and financial services organizations with strict data residency requirements.

Which frameworks and tools does Hopsworks integrate with?

Hopsworks integrates with a wide range of frameworks, including Spark, Flink, Pandas, and DuckDB for feature engineering; Ray for distributed training; and KServe and vLLM for model serving. It also supports RAG pipelines and embedding management for LLM applications.

Does Hopsworks offer a free tier?

Yes. Hopsworks offers a free SaaS public preview tier that allows teams to get started without upfront cost. Paid plans follow a pay-as-you-go model, and enterprise pricing is available for larger deployments requiring dedicated infrastructure or sovereign AI capabilities.