About

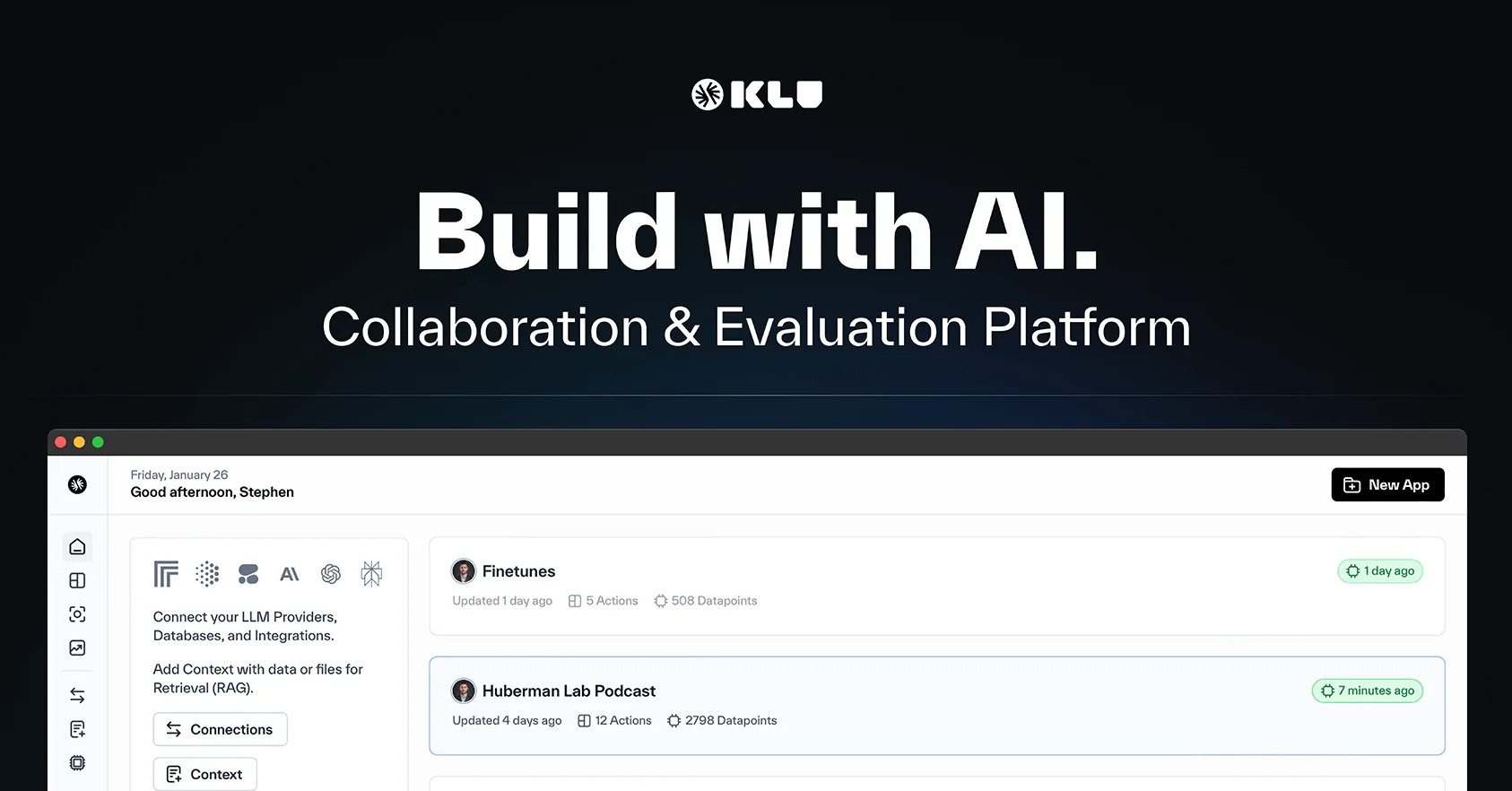

Klu AI is an end-to-end platform for building, deploying, and optimizing large language model applications at scale. It brings together collaborative prompt design, model observability, and evaluation tooling so that cross-functional AI teams can iterate quickly without losing alignment on quality.

At the core is Klu Studio, a shared workspace where teams can build, version, and evaluate prompts together with built-in approval workflows. The Observe module tracks performance, cost, and model drift across every experiment, with metrics tied directly to production data, giving teams full visibility without stitching together multiple tools. Klu supports 50+ integrations with major model providers including OpenAI, Anthropic, and Google, enabling side-by-side model comparisons and cost tracking from a single dashboard. Evaluation cycles blend automated metrics with human feedback to measure output quality at speed.

For enterprise teams, Klu offers private VPC deployments, permissioned workspaces, audit trails, and SSO, all backed by dedicated engineering support for mission-critical rollouts. Pricing starts with a free Starter plan for solo builders, scales to a Team plan at $99 per seat per month for collaborative product teams, and offers custom Enterprise contracts for regulated or high-scale deployments. Klu is ideal for ML platform engineers, product teams shipping LLM features weekly, and enterprise AI organizations that need governance and compliance controls.

Key Features

- Collaborative Prompt Studio: Build, iterate, and version prompts in a shared workspace with built-in evaluation workflows and team approval controls.

- Model Observability Dashboard: Track performance, cost, and model drift in real time across all experiments and production deployments from one unified view.

- Shared Evaluation Sets: Combine automated quality metrics with human feedback to compare models and measure output quality without slowing down iteration cycles.

- Multi-Provider Integrations: Connect through 50+ integrations with major model providers including OpenAI, Anthropic, and Google, enabling side-by-side model comparisons in a single workspace.

- Enterprise-Grade Security & Governance: Deploy in your own VPC with permissioned workspaces, audit trails, evaluation policies, SSO, and dedicated engineering support.

Use Cases

- Product and engineering teams collaborating on prompt design and versioning for customer-facing LLM features

- ML platform teams tracking model performance, cost, and quality drift across multiple providers in production

- Enterprise AI organizations requiring private cloud deployment, SSO, and audit trails for regulated workloads

- Research teams running structured A/B experiments across multiple LLM providers to compare output quality

- Startups building production-grade AI applications that need observability and evaluation without maintaining multiple toolchains

Pros

- Unified Tooling for the Full LLM Lifecycle: Klu consolidates prompt design, evaluation, and observability into one platform, eliminating the need to stitch together multiple tools.

- Strong Collaboration Features: Shared workspaces, versioning, and evaluation dashboards keep product, engineering, and research teams aligned without extra coordination overhead.

- Free Tier Available: The Starter plan is free forever, making it accessible for solo builders and small teams experimenting with LLM workflows.

- Enterprise-Ready Infrastructure: Private cloud deployment, audit trails, and governance controls make Klu suitable for regulated industries and security-conscious organizations.

Cons

- Per-Seat Pricing Can Add Up: The Team plan at $99 per seat per month may become expensive for larger AI teams, especially when scaling across departments.

- Overkill for Simple Single-Model Projects: Teams with a single model provider and minimal collaboration needs may find Klu's full feature set more than they require.

- Enterprise Pricing Is Opaque: Custom Enterprise pricing requires contacting sales, making it difficult to budget or compare costs upfront without a conversation.

Frequently Asked Questions

Klu works with multiple model providers. It supports 50+ integrations across major providers including OpenAI, Anthropic, and Google, allowing teams to connect and compare models from a single workspace.

Klu combines automated quality metrics with human feedback to evaluate model outputs. Shared evaluation sets let teams measure and track quality changes over time without sacrificing iteration speed.

Klu can be deployed privately. Enterprise plans offer private cloud deployment options, such as running Klu in your own VPC with isolated data planes and custom deployment controls.

Start in Klu Studio to design and iterate on prompts, then connect the Observe module to monitor performance and costs in production as your app scales.

Klu supports enterprise compliance and governance requirements. The Enterprise plan includes permissioned workspaces, audit trails, evaluation policies, SSO, and dedicated engineering support.