About

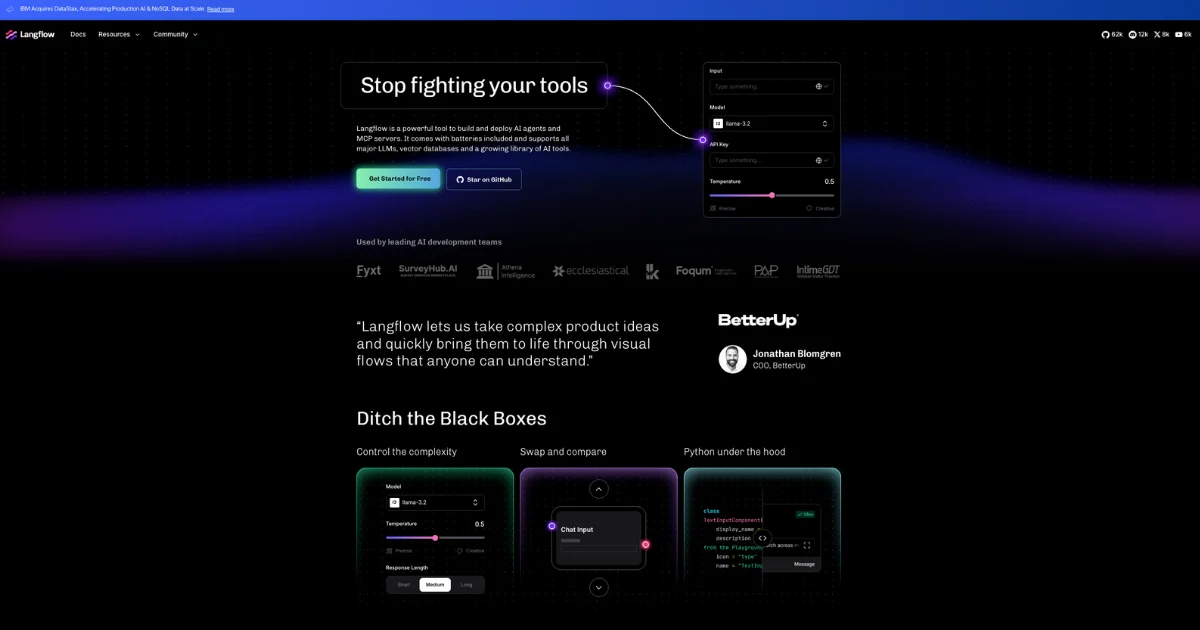

Langflow is an open-source, low-code AI development platform for building agentic workflows and RAG (retrieval-augmented generation) applications with minimal boilerplate code. Its drag-and-drop interface lets developers visually compose AI pipelines from reusable components, while the Python behind each component remains available for customization. Whether you're prototyping a single agent or orchestrating a fleet of agents, Langflow provides the building blocks to move from notebook to production.

The platform supports major LLMs and providers, including OpenAI, Anthropic, Mistral, Meta Llama, and Ollama, as well as vector databases such as Pinecone, Weaviate, Milvus, Qdrant, and Cassandra. Hundreds of pre-built flows and components covering data sources, models, tools, and integrations (Slack, Google Drive, GitHub, Notion, Hugging Face, and many others) accelerate development.

Flows can be deployed as APIs, making it easy to expose AI functionality to production applications. Langflow also supports MCP (Model Context Protocol) servers, which enables multi-agent architectures. Teams can collaborate on shared flows, compare model outputs, and iterate quickly. It is available as an open-source self-hosted solution or as an enterprise-grade managed cloud, making it suitable for individual developers, startups, and large organizations alike.

Key Features

- Visual Flow Builder: Drag-and-drop interface to compose AI pipelines from reusable components, eliminating boilerplate and speeding up iteration.

- Universal LLM & Vector DB Support: Connect to major LLMs (OpenAI, Anthropic, Mistral, Llama, Ollama) and vector databases (Pinecone, Weaviate, Milvus, Qdrant) out of the box.

- Python Customization: Every component is backed by Python, so developers can customize logic, write custom nodes, and extend the platform with their own code.

- Agent & MCP Server Orchestration: Build single agents or entire fleets, and deploy MCP servers with all your components available as tools for multi-agent architectures.

- One-Click API Deployment: Expose any flow as an API in one step, hosted either on Langflow's cloud (which includes a free tier) or on your own self-hosted infrastructure.
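
As a rough illustration of the API deployment feature listed above, the sketch below builds an HTTP request that runs a flow from Python using only the standard library. The endpoint path (`/api/v1/run/<flow_id>`), the payload fields, the `x-api-key` header, and the local address `http://localhost:7860` are assumptions based on Langflow's commonly documented defaults, not details stated in this listing, so check them against the API reference for your version. The helper names `build_run_request` and `run_flow` are hypothetical.

```python
import json
import urllib.request


def build_run_request(base_url, flow_id, message, api_key=None):
    """Build (but do not send) a POST request that runs a flow with one chat message."""
    # Assumed endpoint shape: <base_url>/api/v1/run/<flow_id>
    url = f"{base_url.rstrip('/')}/api/v1/run/{flow_id}"
    # Assumed payload fields for a chat-style flow.
    payload = {
        "input_value": message,
        "input_type": "chat",
        "output_type": "chat",
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Assumed header name for API-key authentication.
        headers["x-api-key"] = api_key
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def run_flow(base_url, flow_id, message, api_key=None, timeout=30):
    """Send the request and return the decoded JSON response."""
    request = build_run_request(base_url, flow_id, message, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Placeholder values: replace with your own server address and flow ID.
    print(run_flow("http://localhost:7860", "your-flow-id", "Hello"))
```

Separating request construction from sending keeps the URL and payload easy to inspect or adjust if your deployment uses a different route or authentication scheme.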

Use Cases

- Building and deploying RAG pipelines that connect LLMs to custom knowledge bases for enterprise Q&A systems.

- Orchestrating multi-agent AI workflows where specialized agents collaborate on complex, multi-step tasks.

- Rapidly prototyping AI-powered chatbots or assistants with integrations to tools like Slack, Notion, or Google Drive.

- Exposing AI workflows as REST APIs to power production web or mobile applications.

- Experimenting with and benchmarking multiple LLMs side-by-side within the same visual pipeline.

Pros

- Broad Integration Ecosystem: Hundreds of pre-built connectors for data sources, models, vector stores, and SaaS tools drastically reduce integration time.

- Low-Code with Full Code Flexibility: The visual editor speeds up development for beginners and non-specialists, while Python access gives power users full control.

- Open Source & Cloud Options: Freely self-hostable under an open-source license, with an enterprise cloud option for teams of any size or with specific compliance needs.

- Rapid Prototyping to Production: The same Langflow experience works in OSS and cloud, making the path from local prototype to scalable production deployment seamless.

Cons

- Learning Curve for Complex Flows: Advanced agentic pipelines and multi-agent orchestration can become complex to manage visually as workflows grow larger.

- Self-Hosting Requires Infrastructure Knowledge: Running Langflow on your own infrastructure demands DevOps expertise for scaling, security, and maintenance.

- Enterprise Features Behind Paywall: Advanced collaboration, SSO, and premium support are reserved for paid cloud or enterprise tiers, which may not suit all budgets.

Frequently Asked Questions

What is Langflow?

Langflow is a low-code, open-source platform for building AI agents and RAG (retrieval-augmented generation) applications using a visual drag-and-drop editor backed by Python.

Is Langflow free to use?

Yes, Langflow is open source and free to self-host. It also offers a free cloud tier, with paid plans available for enterprise features and managed infrastructure.

Which LLMs and vector databases does Langflow support?

Langflow supports major LLMs and providers, including OpenAI, Anthropic, Mistral, Meta Llama, Groq, and Ollama, as well as vector databases such as Pinecone, Weaviate, Milvus, Qdrant, Cassandra, and more.

Can flows be deployed to production?

Yes. Flows can be deployed as APIs with one click. You can host on Langflow's enterprise-grade cloud or deploy to your own infrastructure using the open-source version.

Do I need to know how to code to use Langflow?

No. The visual editor allows non-developers to build functional AI flows. However, Python knowledge unlocks full customization of components and logic for more advanced use cases.