About

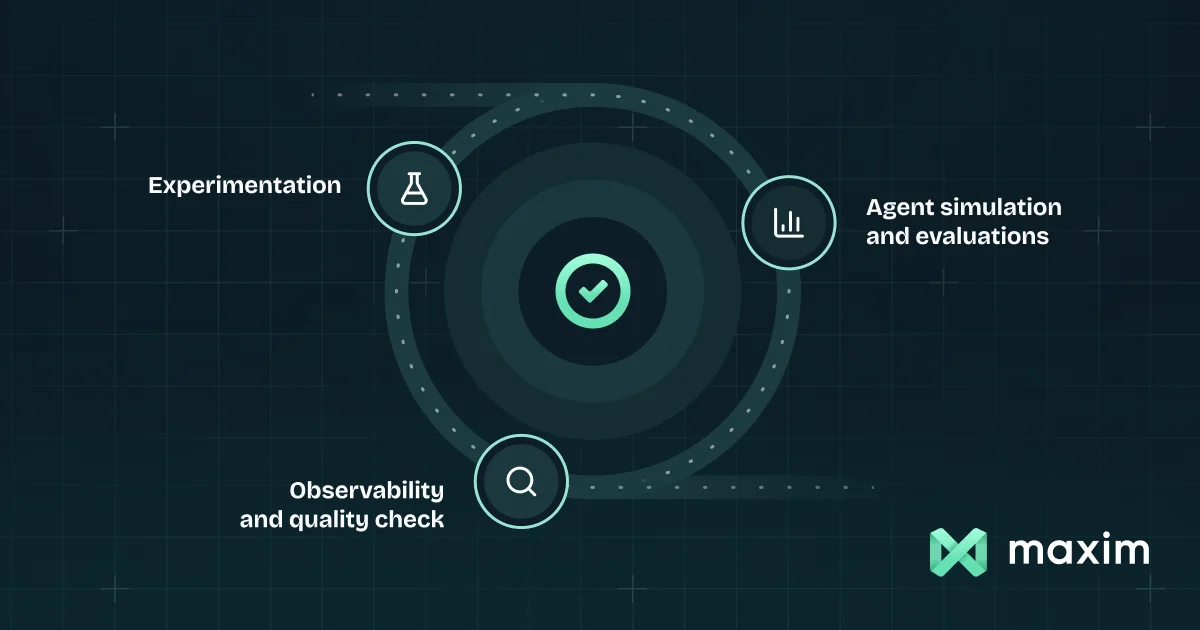

Maxim AI is an evaluation and observability platform for teams building LLM-powered applications and autonomous agents. It covers the entire AI development lifecycle, from early prompt experimentation to production monitoring, enabling teams to ship reliable AI products up to five times faster.

The platform's Experimentation module provides a Prompt IDE for iterating on prompts, models, tools, and context without code changes, along with prompt versioning, low-code prompt chains, and one-click deployment. Its Agent Simulation & Evaluation engine lets developers test agents at scale across thousands of diverse scenarios using both predefined and custom metrics, and integrates natively with CI/CD pipelines for automated quality gates. The Observability layer logs and visualizes complex multi-agentic traces, supports real-time online evaluations across generations, tool calls, and retrievals, and sends proactive alerts on quality or safety regressions. A unified library of LLM-as-a-judge, statistical, programmatic, and human evaluators provides consistent scoring throughout the platform.

The platform also includes Bifrost, an LLM gateway that governs traffic across 1,000+ models at the organizational level. Maxim is framework-agnostic: it offers SDKs, CLI tools, and webhook support, and natively handles structured outputs, multimodal datasets, and runtime context sources. It is trusted by consulting firms, engineering teams, and AI-native companies seeking to move from reactive troubleshooting to proactive quality management.

Key Features

- Prompt IDE & Versioning: Iterate on prompts, models, tools, and context without code changes; version and deploy prompts outside the codebase with a single click.

- Agent Simulation & Evaluation: Test AI agents at scale across thousands of scenarios using AI-powered simulations and a suite of predefined and custom quality metrics.

- Real-Time Observability: Log and visualize multi-agentic traces, debug live issues, run online evaluations on real traffic, and set alerts for quality or safety regressions.

- Unified Evaluator Library: Access pre-built LLM-as-a-judge, statistical, programmatic, and human evaluators—or create fully custom scorers—within a single platform.

- Bifrost LLM Gateway: Govern and route AI traffic across 1,000+ models organization-wide with Maxim's high-performance LLM gateway.
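
To make the evaluator categories above more concrete, here is a minimal sketch of what a programmatic evaluator and a statistical evaluator could look like behind one shared interface. It is plain Python and does not use Maxim's SDK; the `Evaluator` signature, the `EVALUATORS` registry, and the function names are all hypothetical and chosen only for illustration.

```python
import json
from collections import Counter
from typing import Callable, Dict

# Hypothetical evaluator signature: (model_output, reference) -> score in [0, 1].
Evaluator = Callable[[str, str], float]


def is_valid_json(output: str, reference: str) -> float:
    """Programmatic check: 1.0 if the output parses as JSON, else 0.0."""
    try:
        json.loads(output)
        return 1.0
    except json.JSONDecodeError:
        return 0.0


def token_f1(output: str, reference: str) -> float:
    """Statistical check: token-overlap F1 between output and reference."""
    out_tokens = output.lower().split()
    ref_tokens = reference.lower().split()
    if not out_tokens or not ref_tokens:
        return 0.0
    # Multiset intersection counts each shared token at most min(count) times.
    overlap = sum((Counter(out_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(out_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


# Illustrative registry so every evaluator is invoked the same way.
EVALUATORS: Dict[str, Evaluator] = {
    "valid_json": is_valid_json,
    "token_f1": token_f1,
}


def score(output: str, reference: str) -> Dict[str, float]:
    """Run every registered evaluator and collect the scores by name."""
    return {name: fn(output, reference) for name, fn in EVALUATORS.items()}
```

An LLM-as-a-judge or human evaluator would fit the same shape by returning a score from a model call or a review queue instead of a local computation.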

Use Cases

- AI engineering teams iterating on agent prompts and evaluating model performance before deploying to production.

- Quality assurance workflows for LLM-powered products that require systematic regression testing and CI/CD integration.

- Enterprise organizations monitoring multi-agent systems in production for real-time quality, safety, and performance regressions.

- Data and ML teams benchmarking multiple LLMs against custom evaluation criteria to select the best model for a given task.

- Companies building RAG applications who need to evaluate retrieval quality, tool call accuracy, and end-to-end response quality at scale.
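
For the regression-testing and CI/CD use case above, a quality gate can be sketched as a small check that fails the pipeline when averaged evaluation scores drop below a threshold. This is an illustrative outline only, not Maxim's API: the `gate` and `run_gates` functions, the metric names, and the thresholds are assumptions.

```python
import sys
from statistics import mean
from typing import Dict, List


def gate(scores: List[float], threshold: float) -> bool:
    """Pass only if there are scores and their mean meets the threshold."""
    return bool(scores) and mean(scores) >= threshold


def run_gates(results: Dict[str, List[float]], thresholds: Dict[str, float]) -> int:
    """Return a process exit code: 0 if every metric passes, 1 otherwise."""
    failed = [
        name
        for name, threshold in thresholds.items()
        # A metric with no recorded scores is treated as a failure.
        if not gate(results.get(name, []), threshold)
    ]
    for name in failed:
        print(f"quality gate failed: {name}", file=sys.stderr)
    return 1 if failed else 0
```

A CI step could then end with `sys.exit(run_gates(results, thresholds))`, where `results` holds per-test-case scores from an earlier evaluation run, so that a failing metric blocks the deployment.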

Pros

- End-to-end workflow coverage: Covers the full AI development lifecycle from prompt experimentation to production monitoring, eliminating the need for multiple disconnected tools.

- Framework-agnostic integration: Works with leading AI providers and frameworks, and offers SDKs, a CLI, and webhooks, making it easy to plug into an existing stack.

- CI/CD-native automation: Seamlessly integrates with CI/CD workflows for automated evaluation gates, enabling proactive quality management before deployments.

- Significant time-to-production gains: Customers report a reduction of up to 75% in time to production, which they attribute to systematic testing and automated monitoring.

Cons

- Learning curve for full platform: With simulation, observability, evaluations, and gateway features all in one place, onboarding to the full platform can take time for smaller teams.

- Limited pricing transparency: Detailed pricing tiers are not prominently shown on the homepage, so teams must contact sales or sign up to understand enterprise costs.

- Primarily suited for agent-based use cases: Teams building simpler LLM integrations may find the depth of the platform more than they need for straightforward chatbot or completion tasks.

Frequently Asked Questions

Maxim supports a wide range of GenAI applications including conversational agents, multi-step agentic workflows, RAG pipelines, tool-calling agents, and standard LLM completions. It is framework-agnostic and works with all major AI providers.

Maxim provides SDKs, a CLI, and webhook support, and integrates natively with CI/CD workflows so evaluation and quality checks can be automated as part of every deployment process.

Bifrost is Maxim's high-performance LLM gateway that allows organizations to govern AI traffic, manage usage, and route requests across 1,000+ models from a single control plane.

Maxim supports custom evaluators alongside its pre-built library, including LLM-as-a-judge, statistical, programmatic, and human scoring approaches, so teams can measure quality metrics specific to their use case.

Maxim offers a free plan to get started. Teams can sign up and begin running evaluations and experiments without a credit card, with paid plans available for higher usage and enterprise needs.