About

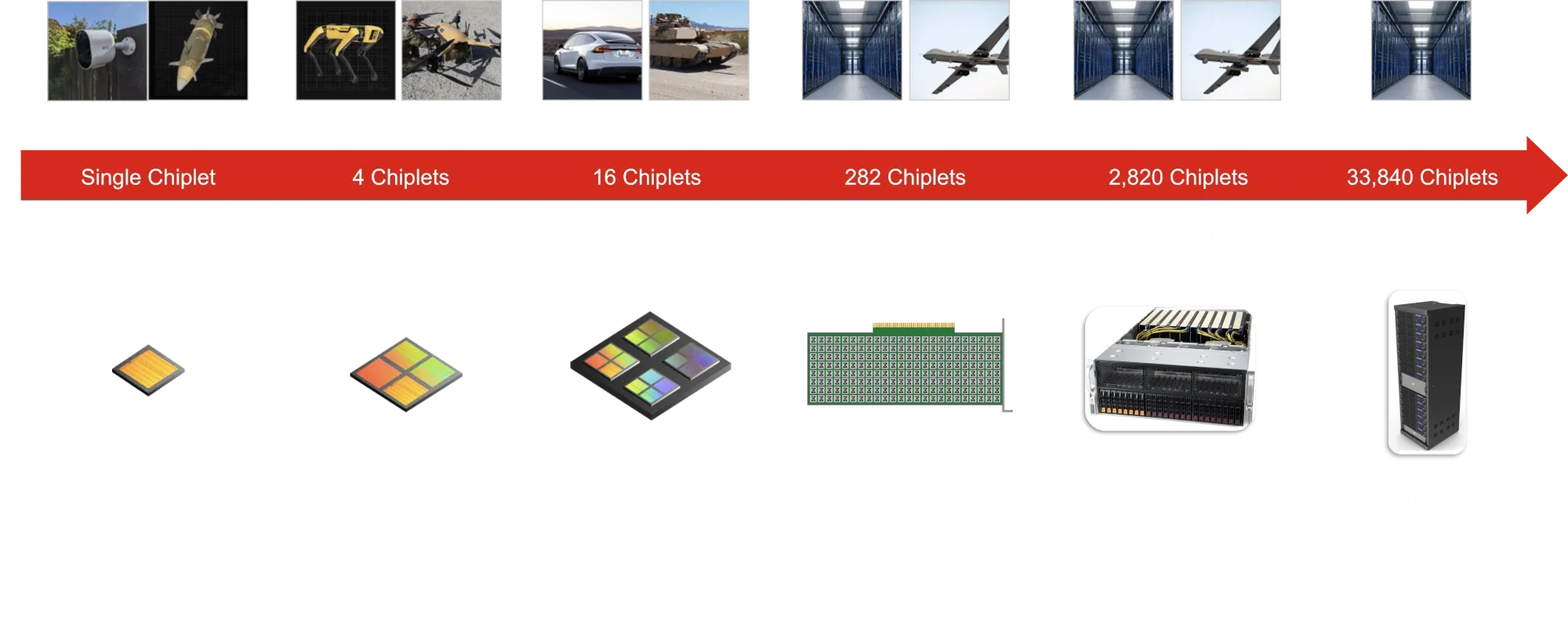

Mythic is a high-performance analog computing company taking a fundamentally different approach to AI inference hardware. Its Analog Processing Units (APUs) collapse compute and memory into a single plane, storing AI model weights directly in the processor rather than shuttling them back and forth between separate memory chips. This removes the memory bottleneck that constrains traditional digital architectures. Mythic claims improvements of roughly two orders of magnitude over GPUs on three dimensions: energy efficiency (100x lower power consumption), cost (100x more affordable), and performance scalability (100x higher throughput).

The technology is purpose-built for modern AI inference workloads. These range from running large language models such as Llama 3 70B at a claimed rate of over 10,000 tokens/sec/user in data centers to autonomous vehicles, fully automated robotics in factories and farms, and advanced defense sensing and planning systems.

The company has a joint chip development agreement with Honda for next-generation vehicles and recently completed an oversubscribed $125M fundraising round. Led by CEO Dr. Taner Ozcelik, an NVIDIA veteran, Mythic aims to challenge GPU dominance in AI computing by offering enterprises a more efficient path to deploying AI at scale, both at the edge and in the cloud.

Key Features

- Analog Compute-in-Memory Architecture: Stores AI model parameters directly inside the processor, eliminating the energy and latency costs of moving data between separate memory and compute units.

- 100x Energy Efficiency vs. GPUs: Mythic APUs consume dramatically less power than industry-standard GPUs, making large-scale and edge AI deployments economically and environmentally viable.

- High-Throughput LLM Inference: Capable of serving over 10,000 tokens/sec/user for models like Llama 3 70B while reducing total cost of ownership by over 100x compared to GPU-based infrastructure.

- Multi-Market Edge AI Deployment: Purpose-designed for diverse real-world applications including automotive ADAS, industrial robotics, smart cities, defense systems, and consumer AR/VR devices.

- Dataflow Architecture for AI Workloads: Custom dataflow architecture optimized for neural network inference, enabling scalable performance across both compact edge devices and large data center deployments.
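To make the memory-bottleneck argument concrete, the following back-of-the-envelope sketch estimates how much weight data a conventional design would have to pull from external memory to hit the quoted per-user token rate. It is an illustration under stated assumptions, not a Mythic figure: it assumes a dense 70-billion-parameter model, 8-bit weights, one token generated at a time for a single user, and no batching or speculative decoding that would let one weight read serve several tokens. The function name `weight_traffic_bytes_per_s` is hypothetical.

```python
def weight_traffic_bytes_per_s(params: float, bytes_per_param: float,
                               tokens_per_s: float) -> float:
    """Bytes per second of weight reads if every generated token
    requires streaming all model weights from external memory once."""
    return params * bytes_per_param * tokens_per_s


PARAMS = 70e9           # approximate parameter count of Llama 3 70B
BYTES_PER_PARAM = 1.0   # assumption: 8-bit quantized weights
TOKENS_PER_S = 10_000   # per-user rate quoted in this listing

traffic = weight_traffic_bytes_per_s(PARAMS, BYTES_PER_PARAM, TOKENS_PER_S)
print(f"{traffic / 1e12:.0f} TB/s of weight traffic")  # prints "700 TB/s of weight traffic"
```

Under these assumptions the weight traffic alone comes to about 700 TB/s for a single user stream, far beyond the few TB/s that high-bandwidth memory on current accelerators typically provides. That gap is the motivation for keeping weights stationary inside the compute array; the sketch says nothing about whether any particular chip achieves the quoted rate.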

Use Cases

- Deploying energy-efficient LLM inference servers in data centers to dramatically reduce electricity costs and TCO compared to GPU clusters.

- Powering next-generation ADAS and autonomous driving systems in vehicles, with a claimed 100x more AI performance than GPU-based solutions within strict automotive power budgets.

- Enabling fully automated robotic systems in factories and farms with on-device intelligence that doesn't rely on cloud connectivity.

- Equipping defense platforms with real-time, low-power threat detection and mission planning AI in size- and weight-constrained environments.

- Running smart city and AR/VR edge AI workloads locally on devices, reducing latency and bandwidth requirements while improving user privacy.

Pros

- Massive Energy and Cost Savings: The analog compute-in-memory approach delivers up to 100x reductions in power consumption and cost compared to conventional GPU solutions, making AI inference far more sustainable at scale.

- Purpose-Built for AI Inference: Unlike general-purpose GPUs adapted for AI, Mythic APUs are architecturally optimized specifically for inference workloads, yielding superior performance-per-watt.

- Strong Industry Partnerships: Strategic collaborations with major players like Honda, combined with a $125M raise, signal strong enterprise validation and a clear path to broad deployment.

- Versatile Deployment Across Markets: A single technology platform addresses use cases from tiny edge sensors to full data-center racks, reducing the need for multiple vendor solutions.

Cons

- Early-Stage Commercial Availability: Mythic is an emerging hardware company, and its chips and developer ecosystem may not yet match the maturity, tooling, and software support of established GPU vendors like NVIDIA.

- Analog Sensitivity Challenges: Analog computing can be susceptible to noise, temperature variation, and manufacturing tolerances, which may require additional calibration and validation in sensitive deployment environments.

- Niche Ecosystem: Developers and enterprises accustomed to GPU-centric frameworks (CUDA, PyTorch GPU backends) may face a learning curve and toolchain migration when adopting Mythic's platform.
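The analog sensitivity concern above can be explored with a small simulation. The sketch below is a generic illustration, not a model of Mythic's hardware: it assumes device variation behaves like independent multiplicative Gaussian noise on each stored weight, and it measures how far a matrix-vector product drifts from the ideal result. All names (`matvec`, `perturb`, `relative_error`) and the noise levels are hypothetical choices for the example.

```python
import random


def matvec(weights, x):
    """Ideal matrix-vector product, one dot product per row."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def perturb(weights, rel_sigma, rng):
    """Scale each weight by (1 + Gaussian noise) to mimic device variation."""
    return [[w * (1.0 + rng.gauss(0.0, rel_sigma)) for w in row]
            for row in weights]


def relative_error(ideal, noisy):
    """Euclidean norm of the difference divided by the norm of the ideal output."""
    num = sum((a - b) ** 2 for a, b in zip(ideal, noisy)) ** 0.5
    den = sum(a ** 2 for a in ideal) ** 0.5
    return num / den


rng = random.Random(0)  # fixed seed so runs are repeatable
weights = [[rng.uniform(-1, 1) for _ in range(64)] for _ in range(16)]
x = [rng.uniform(-1, 1) for _ in range(64)]
ideal = matvec(weights, x)

for rel_sigma in (0.0, 0.01, 0.05):
    noisy = matvec(perturb(weights, rel_sigma, rng), x)
    print(f"rel_sigma={rel_sigma:.2f}  "
          f"relative error={relative_error(ideal, noisy):.4f}")
```

Sweeping `rel_sigma` in a model like this is one way to estimate how much calibration headroom a given network needs before accuracy degrades. Real analog arrays also face temperature drift and converter quantization, which this sketch leaves out.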

Frequently Asked Questions

How do Mythic's APUs differ from traditional GPUs?

Mythic's Analog Processing Units store AI model weights directly inside the processor using compute-in-memory technology. Traditional digital GPUs store parameters in separate DRAM, requiring constant high-energy data transfers. By eliminating this memory bottleneck, Mythic says it achieves up to 100x better energy efficiency, measured as performance per watt.

What applications does Mythic target?

Mythic targets a wide range of AI inference use cases, including data center LLM serving, automotive ADAS and sensor fusion, industrial and agricultural robotics, smart city infrastructure, drone and aerospace systems, and defense sensing and planning applications.

Can Mythic's hardware run large language models?

Yes. According to Mythic, its data center solutions can serve over 10,000 tokens/sec/user for Llama 3 70B while reducing total cost of ownership by over 100x compared to conventional GPU-based deployments.

What partnerships and funding does Mythic have?

Mythic has announced a joint chip development agreement with Honda for next-generation automotive AI and recently closed an oversubscribed $125M funding round. The company is led by Dr. Taner Ozcelik, an NVIDIA veteran.

Is Mythic's technology available today?

Mythic is actively commercializing its APU technology and engaging with enterprise customers across automotive, defense, and data center markets. Interested parties should contact Mythic directly through its website for product availability, sampling, and partnership inquiries.