About

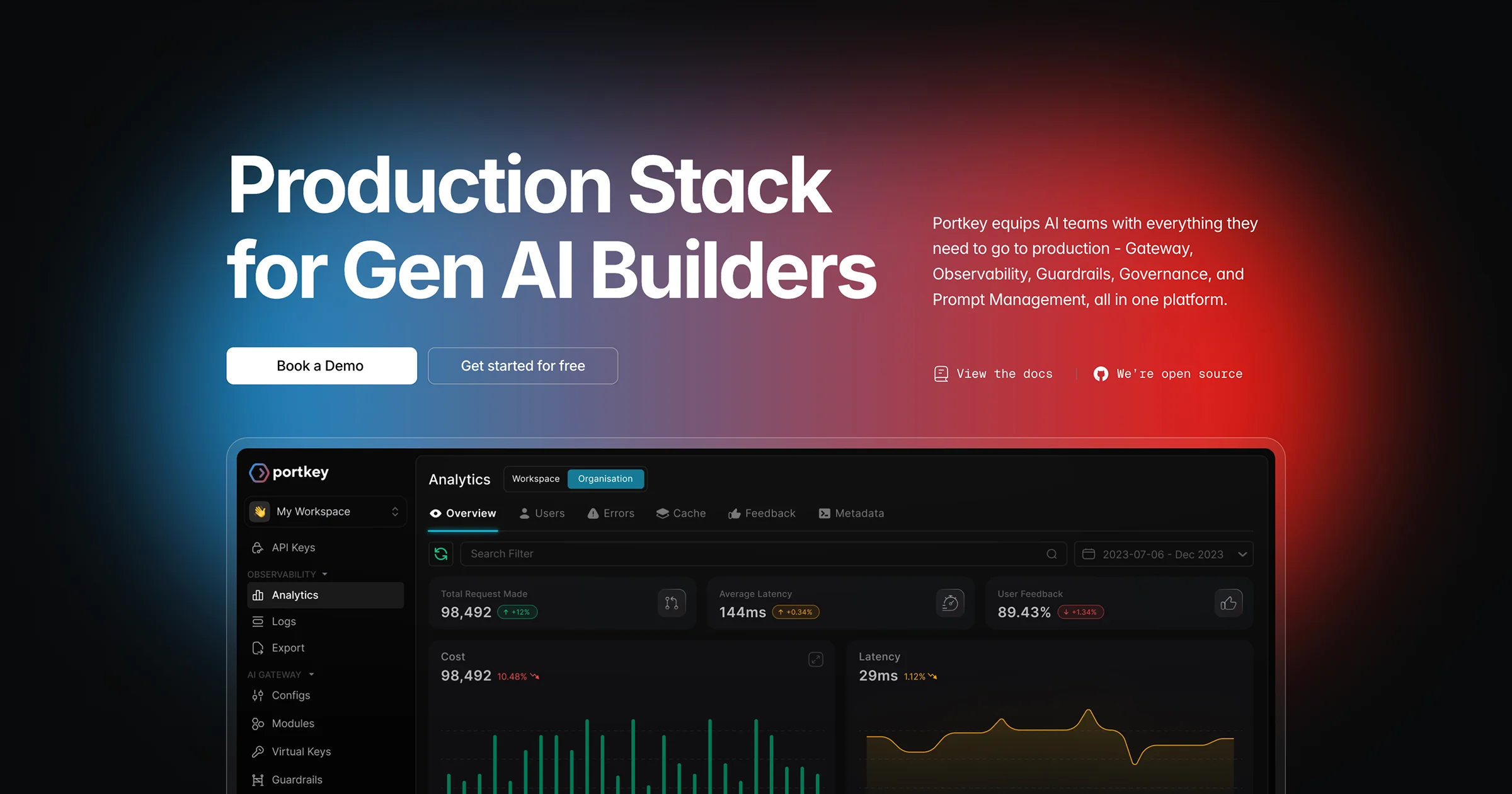

Portkey is a production stack for AI engineering teams that need to ship reliable, cost-effective LLM-powered applications at scale. At its core is the AI Gateway, which exposes a single, unified API endpoint for more than 1,600 large language models, so teams do not have to integrate and maintain a separate SDK for each provider.

Beyond routing, Portkey adds a suite of production features: real-time observability dashboards for monitoring LLM behavior and catching anomalies early; configurable guardrails to keep AI outputs safe and on-policy; governance controls for managing access, budgets, and usage across teams; and a visual prompt management module for versioning and testing prompts. Caching cuts redundant API calls, and users report saving thousands of dollars on repeated LLM calls.

Portkey is open source, so teams can inspect, self-host, or contribute to it. It is drop-in compatible with the OpenAI SDK, so existing code needs few changes to adopt it. Whether you are a startup experimenting with AI or an enterprise processing billions of tokens daily, Portkey aims to provide the reliability, visibility, and control needed to move from prototype to production.

Key Features

- Unified AI Gateway: Access 1,600+ LLMs from a single API endpoint, removing the need to manage multiple provider integrations and SDKs.

- Real-Time Observability: Monitor LLM behavior, track token usage, catch anomalies early, and manage costs proactively through a live dashboard.

- Guardrails & Governance: Enforce output safety policies, manage team-level access controls, and set budget limits to keep AI deployments compliant and on-policy.

- Intelligent Caching: Cache responses to repeated LLM requests to reduce API costs and latency; users report saving thousands of dollars.

- Prompt Management: Version, test, and collaborate on prompts visually without code deployments, keeping prompt iteration fast and organized.
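The caching idea in the list above can be illustrated with a minimal sketch. This is not Portkey's implementation: it is a generic exact-match cache, and `ExactMatchCache`, `cache_key`, and the `call_model` callback are hypothetical names introduced only for this example. It shows why a repeated, identical request does not need a second provider call.

```python
import hashlib
import json
from typing import Callable, Dict, List


def cache_key(model: str, messages: List[dict]) -> str:
    """Derive a stable key from the request contents (hypothetical helper)."""
    payload = json.dumps({"model": model, "messages": messages}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ExactMatchCache:
    """In-memory cache that reuses responses for byte-identical requests."""

    def __init__(self, call_model: Callable[[str, List[dict]], str]) -> None:
        # call_model stands in for a real provider call; it is an assumption.
        self._call_model = call_model
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def complete(self, model: str, messages: List[dict]) -> str:
        key = cache_key(model, messages)
        if key in self._store:
            # Identical request seen before: skip the provider call.
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._call_model(model, messages)
        self._store[key] = response
        return response
```

A production cache would also need expiry and, for "similar" rather than identical requests, some form of semantic matching; both are left out here to keep the sketch short.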

Use Cases

- Enterprise AI teams managing multiple LLM providers who need a unified gateway to simplify integrations, control costs, and enforce usage policies across departments.

- AI startups building LLM-powered products who need production-grade observability and guardrails to monitor and safeguard model outputs before launch.

- DevOps and ML engineering teams embedding LLM calls in CI/CD pipelines who want to use caching to avoid repeated API costs on unchanged test suites.

- Platform teams setting up internal AI governance, defining budget limits and access controls so different business units can use AI safely and within approved boundaries.

- Prompt engineers and product teams who need a visual prompt management system to version, A/B test, and iterate on prompts without requiring code deployments.

Pros

- Massive Model Coverage: Supports 1,600+ LLMs through one unified API, giving teams the flexibility to swap or combine models without re-engineering integrations.

- Open Source & Transparent: The core gateway is open source, allowing teams to audit, self-host, or extend the platform to fit their security and compliance requirements.

- Proven Cost Savings: Built-in caching and usage monitoring help teams reduce LLM spending, and the monitoring makes those savings visible.

- Drop-In Compatibility: Works as a drop-in replacement for the OpenAI SDK, making adoption fast with minimal code changes for existing projects.

Cons

- Complexity for Simple Use Cases: Teams with a single LLM provider and basic needs may find Portkey's full feature set more than necessary for their scale.

- Self-Hosting Requires DevOps Effort: While open source, running a self-hosted instance at scale demands infrastructure expertise and ongoing maintenance.

- Pricing Scales With Usage: Higher token volumes and advanced enterprise features may push costs up, requiring careful planning for large-scale deployments.

Frequently Asked Questions

What is Portkey AI Gateway?

Portkey AI Gateway is an open-source AI infrastructure platform that provides a unified API to access 1,600+ LLMs, alongside observability, guardrails, prompt management, and governance tools for production AI applications.

Is Portkey open source?

Yes, Portkey is open source. The core AI gateway is available on GitHub, and teams can self-host it or use the managed cloud version.

How does Portkey reduce LLM costs?

Portkey's caching stores LLM responses and reuses them for identical or similar requests, preventing redundant API calls and reducing token spend. This is particularly valuable for testing and CI/CD workflows.

Which models does Portkey support?

Portkey supports 1,600+ models across major providers including OpenAI, Anthropic, Google, Mistral, Cohere, and many more, accessible through a single unified API.

How easy is it to integrate Portkey?

Integration is straightforward: Portkey is drop-in compatible with the OpenAI SDK. You typically change only your base URL and API key, and your existing code then routes through Portkey with minimal modifications.
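As a rough illustration of the "change your base URL and API key" step, the sketch below builds the same OpenAI-style chat completion request against two base URLs using only the Python standard library. `GATEWAY_BASE_URL` is a placeholder rather than Portkey's real endpoint, and `build_chat_request` and the model name are hypothetical; the actual URL, key format, and any extra headers should be taken from Portkey's documentation. Nothing is sent over the network.

```python
import json
import urllib.request

# Placeholder for illustration only; not a real gateway endpoint.
GATEWAY_BASE_URL = "https://gateway.example.com/v1"
PROVIDER_BASE_URL = "https://api.openai.com/v1"


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str):
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Same application code; only the base URL and the key differ.
direct = build_chat_request(PROVIDER_BASE_URL, "provider-key", "example-model", "Hello")
via_gateway = build_chat_request(GATEWAY_BASE_URL, "gateway-key", "example-model", "Hello")
```

The request body is identical in both cases, which is what makes this kind of gateway a drop-in change: the application logic stays the same and only the connection settings move.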