About

SaladCloud democratizes AI compute by harnessing idle capacity across a global network of 450,000+ consumer NVIDIA RTX/GTX GPUs, making high-scale inference, batch processing, and model deployment substantially cheaper than on AWS, GCP, or Azure. With over 60,000 daily active GPUs and prices starting at $0.02/hour, teams can run production AI workloads at a fraction of conventional cloud costs.

The platform offers several tiers: the Salad Container Engine for fully managed, massively scalable deployments; Community Cloud for flexible, cost-effective GPU access; and Secure Cloud for enterprise-grade workloads. The Salad Gateway Service provides dedicated proxies in ~200 countries for data collection and residential IP tasks, and the out-of-the-box Salad Transcription API delivers fast, affordable speech-to-text at scale.

SaladCloud is purpose-built for AI/ML use cases including image generation, voice AI, computer vision, ZK proofs, molecular dynamics simulations, and large batch jobs. Civitai reports serving 10 million AI-generated images per day across 600+ consumer GPUs, and other customers have achieved 85% cost reductions compared to major hyperscalers.

Developers can deploy via the SaladCloud Portal, CLI, SDKs, or GitHub integrations, and Virtual Kubelet support enables Kubernetes-style pod deployments. A free trial is available, with volume discounts and committed contracts for high-usage teams.

Key Features

- Salad Container Engine: Fully managed, massively scalable container deployment across thousands of distributed GPUs with simple configuration.

- Multi-Tier GPU Access: Choose between Community Cloud (flexible, low-cost) and Secure Cloud (enterprise-grade), alongside the fully managed Salad Container Engine.

- Salad Transcription API: Ready-to-use speech-to-text API powered by the distributed GPU network, enabling high-throughput, low-cost audio transcription.

- Global Node Network: 450,000+ nodes contributed by individual PC owners across 191 countries deliver massive parallel compute capacity, plus residential IP proxying via the Gateway Service.

- Kubernetes & SDK Integrations: Virtual Kubelet support for K8s-style pod deployments, plus SDKs, APIs, and GitHub integrations for CI/CD pipelines.

Use Cases

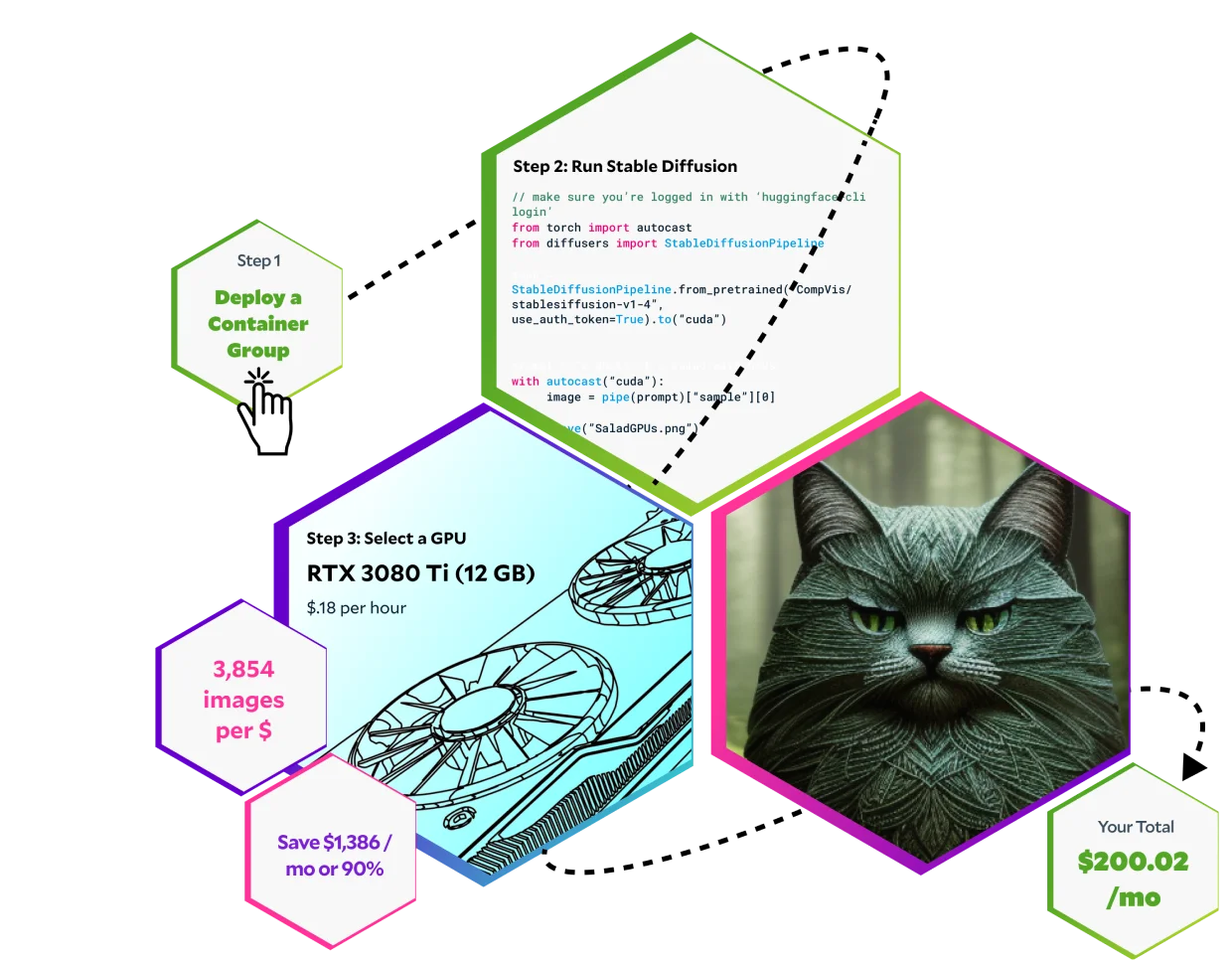

- Running large-scale AI image generation pipelines at up to 90% lower GPU costs than traditional cloud providers.

- Executing massive batch processing jobs (transcription, data transformation, or ML inference) at low cost.

- Deploying voice AI and speech-to-text workloads using the Salad Transcription API for high-throughput audio processing.

- Running computer vision models and ZK proof generation workloads across thousands of parallel distributed GPUs.

- Collecting web data via residential proxies in 200+ countries using the Salad Gateway Service for undetected, large-scale data gathering.

Pros

- Extreme Cost Savings: Up to 90% cheaper than AWS, GCP, or Azure, with customers reporting reductions of up to 85% on production AI inference workloads.

- Massive Scale on Demand: Instantly access 60,000+ daily active GPUs without procurement delays, ideal for bursty batch jobs or rapid scaling events.

- Broad Use-Case Coverage: Supports image generation, voice AI, computer vision, ZK proofs, molecular dynamics, data collection, and more out of the box.

- Developer-Friendly Tooling: Comprehensive portal, docs, GitHub repos, SDKs, and a model recipe library reduce time-to-deploy significantly.

Cons

- Consumer-Grade GPUs Only: All nodes use NVIDIA RTX/GTX class GPUs; A100/H100 data-center cards are not available on the Community Cloud tier.

- Distributed Reliability Trade-offs: Consumer nodes can go offline unpredictably, requiring workloads to be designed for fault tolerance and retries.

- Enterprise Pricing Requires Sales Contact: Volume discounts and committed contracts are not self-serve; high-usage teams must go through a sales conversation.

Frequently Asked Questions

What GPUs are available on SaladCloud?

All GPUs on SaladCloud are NVIDIA RTX/GTX class cards, sourced from individual PC owners worldwide rather than from data-center racks.

How much can I save compared with major cloud providers?

SaladCloud customers typically save 50–90% on GPU compute costs. Benchmarked case studies show savings of up to 85% vs. major hyperscalers for AI inference workloads.
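To check a savings figure against your own numbers, the arithmetic is simple enough to sketch. The snippet below is a minimal illustration, not an official pricing calculator: the function names are hypothetical, and the baseline rate in the usage example is a placeholder you would replace with a real quote from your current provider. Only the $0.02/hour starting price comes from this page.

```python
def savings_percent(baseline_hourly: float, alternative_hourly: float) -> float:
    """Percentage saved by moving from the baseline rate to the alternative rate."""
    if baseline_hourly <= 0:
        raise ValueError("baseline_hourly must be positive")
    return (baseline_hourly - alternative_hourly) / baseline_hourly * 100.0


def monthly_cost(hourly_rate: float, gpu_count: int,
                 hours_per_day: float = 24.0, days: int = 30) -> float:
    """Total cost of running gpu_count GPUs at hourly_rate for one month."""
    return hourly_rate * gpu_count * hours_per_day * days


if __name__ == "__main__":
    # ASSUMPTION: placeholder baseline rate, not a real hyperscaler price.
    baseline_rate = 1.00
    # Starting price quoted on this page; actual rates depend on the GPU model.
    salad_rate = 0.02
    print(f"Savings: {savings_percent(baseline_rate, salad_rate):.1f}%")
    print(f"Monthly cost for 100 GPUs: ${monthly_cost(salad_rate, 100):,.2f}")
```

Comparing like for like matters here: the starting price applies to entry-level cards, so use the rate for the GPU class your workload actually needs.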

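Can SaladCloud handle production-scale workloads?

Yes. Companies like Civitai run 10 million image generations per day on SaladCloud's consumer GPU fleet. Because nodes can go offline without warning, workloads should be designed to handle interruptions gracefully, for example by retrying failed jobs.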
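One common way to tolerate node interruptions is to wrap each unit of work in a retry loop with exponential backoff. The sketch below is a generic illustration of that pattern, not SaladCloud's API: `NodeInterrupted` and `run_with_retries` are hypothetical names, and a real deployment would map whatever error its job queue or client raises onto this exception.

```python
import time
from typing import Callable, TypeVar

R = TypeVar("R")


class NodeInterrupted(Exception):
    """Hypothetical signal that the node running a job went offline."""


def run_with_retries(job: Callable[[], R], max_attempts: int = 5,
                     base_delay: float = 1.0) -> R:
    """Run job(), retrying on NodeInterrupted with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return job()
        except NodeInterrupted:
            if attempt == max_attempts:
                raise  # retries exhausted; let the caller decide what to do
            # Wait base_delay, then 2x, 4x, ... before the next attempt.
            time.sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("unreachable")


if __name__ == "__main__":
    attempts = {"count": 0}

    def flaky_job() -> str:
        # Simulated job that is interrupted twice before succeeding.
        attempts["count"] += 1
        if attempts["count"] < 3:
            raise NodeInterrupted("node went offline")
        return "done"

    print(run_with_retries(flaky_job, base_delay=0.0))
```

Retries are only safe when jobs are idempotent, so each job should write its result under a stable key such that a repeated run overwrites rather than duplicates the output.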

Does SaladCloud support Kubernetes?

Yes. SaladCloud supports Virtual Kubelet, so Kubernetes pods can be run as container deployments on the distributed network.

Is there a free trial?

Yes. SaladCloud offers a free trial so you can test deployments before committing to paid usage. Enterprise and high-volume contracts are available through the sales team.