About

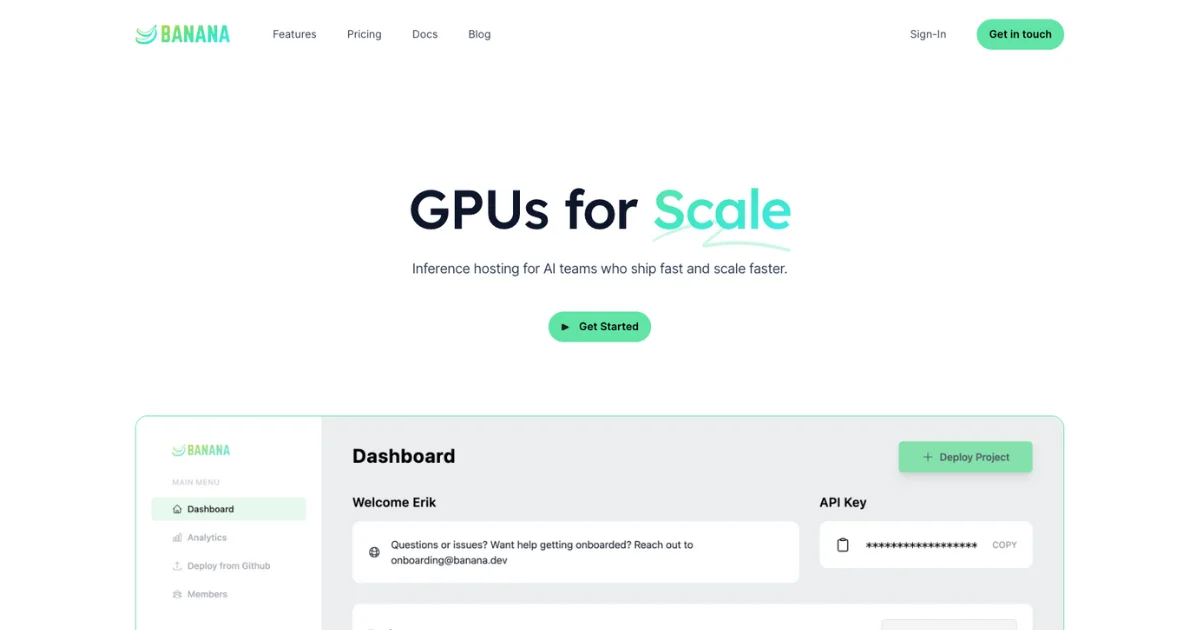

Banana is a high-throughput AI inference hosting platform for engineering teams that need to deploy and scale machine learning models without overpaying for compute. Unlike traditional serverless GPU providers that take significant margins on GPU time, Banana charges a flat monthly rate plus at-cost compute with zero markup, which makes it substantially more economical at scale. The platform automatically scales GPU resources up and down based on real-time demand, keeping both cost and latency in check without manual intervention.

Banana ships with a DevOps suite that includes GitHub integration, CI/CD pipelines, rolling deployments, CLI tooling, request tracing, and real-time logs, so teams can run production AI workloads without standing up separate infrastructure. Built-in observability tools surface request traffic, latency trends, and errors in real time, while business analytics track spend and endpoint usage over time. A full Automation API, together with SDKs and a CLI, enables programmatic control over all deployments.

The platform is powered by Potassium, an open-source HTTP framework designed specifically for AI inference workloads. It supports any model architecture and lets developers write inference backends in Python with minimal boilerplate.

Banana targets AI startups and product teams looking for a scalable, affordable, developer-friendly alternative to general-purpose cloud GPU providers. Plans start at $1,200/month for small teams, with custom Enterprise tiers available.

Key Features

- Autoscaling GPUs: Automatically scales GPU capacity up and down based on demand, keeping costs low during idle periods and performance high under load.

- Pass-Through Pricing: Charges a flat monthly fee plus at-cost compute with zero markup, unlike most serverless GPU providers that take large margins on GPU time.

- Full DevOps Platform: Includes GitHub integration, CI/CD pipelines, CLI, rolling deployments, branch environments, request tracing, and real-time logs — all built in.

- Real-Time Observability & Analytics: Monitor request traffic, latency, and errors in real time; track spend and endpoint usage over time with built-in business analytics dashboards.

- Potassium Open-Source Framework: Powered by Potassium, an open-source HTTP framework purpose-built for AI inference that supports any model architecture with minimal Python boilerplate.

Use Cases

- Deploying large language models or diffusion models at production scale with automatic GPU autoscaling.

- Reducing inference infrastructure costs by eliminating GPU provider markup and paying only at-cost compute rates.

- Shipping production AI APIs rapidly using built-in CI/CD, GitHub integration, and rolling deployment pipelines.

- Monitoring AI inference performance in real time with built-in observability dashboards for latency, traffic, and errors.

- Automating multi-model deployment workflows programmatically via Banana's open Automation API, SDKs, and CLI.

Pros

- Zero Markup on Compute: At-cost GPU pricing delivers significant savings compared to traditional serverless GPU providers that charge substantial margins on compute.

- Batteries-Included DevOps: CI/CD, GitHub integration, CLI, rolling deploys, and observability are all built in, reducing the need for separate infrastructure tooling.

- Seamless Autoscaling: Handles variable inference workloads automatically, eliminating over-provisioning costs and manual capacity management.
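To make the autoscaling idea concrete, here is a minimal sketch of a demand-based scaling rule. It is not Banana's actual algorithm, which the listing does not describe. The function name `desired_replicas` and the `per_replica_capacity` parameter are hypothetical; the default ceiling of 50 mirrors the Team plan's limit of 50 parallel GPUs.

```python
import math


def desired_replicas(
    in_flight: int,
    per_replica_capacity: int,
    max_replicas: int = 50,  # Team plan limit on parallel GPUs (from the listing)
    min_replicas: int = 0,   # assumption: scale to zero when idle
) -> int:
    """Return how many GPU replicas are needed for the current load.

    Illustrative rule only: enough replicas to cover all in-flight
    requests, clamped to the [min_replicas, max_replicas] range.
    """
    if per_replica_capacity <= 0:
        raise ValueError("per_replica_capacity must be positive")
    needed = math.ceil(in_flight / per_replica_capacity)
    return max(min_replicas, min(max_replicas, needed))
```

With a hypothetical capacity of 4 concurrent requests per GPU, 9 in-flight requests call for 3 replicas, an idle endpoint scales to 0, and a burst of 1,000 requests is capped at the plan limit of 50.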

Cons

- High Minimum Cost: The entry-level Team plan starts at $1,200/month, which is cost-prohibitive for individual developers or very early-stage projects.

- Platform Sunset: Banana announced a sunset of its services, raising concerns about long-term reliability, continued development, and vendor support.

Frequently Asked Questions

How does Banana's pricing differ from other serverless GPU providers?

Banana charges a flat monthly fee plus at-cost compute with zero markup, while most serverless GPU providers take significant margins on GPU time. This makes Banana more cost-effective at high inference volumes, where the savings on compute outweigh the flat fee.
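The point at which a flat fee plus at-cost compute becomes cheaper than a marked-up provider can be worked out with simple arithmetic. The sketch below is illustrative only: the $1,200 flat fee comes from the Team plan, while the $2.00 per GPU-hour at-cost rate and the 50% competitor markup are hypothetical numbers, not published prices.

```python
FLAT_FEE = 1200.0  # Team plan monthly fee, from the listing


def pass_through_cost(gpu_hours: float, at_cost_rate: float,
                      flat_fee: float = FLAT_FEE) -> float:
    """Monthly cost under flat fee plus at-cost compute (zero markup)."""
    return flat_fee + gpu_hours * at_cost_rate


def markup_cost(gpu_hours: float, at_cost_rate: float, markup: float) -> float:
    """Monthly cost at a provider that adds a fractional markup to compute."""
    return gpu_hours * at_cost_rate * (1 + markup)


def break_even_hours(at_cost_rate: float, markup: float,
                     flat_fee: float = FLAT_FEE) -> float:
    """GPU-hours per month above which the pass-through model is cheaper."""
    if at_cost_rate <= 0 or markup <= 0:
        raise ValueError("at_cost_rate and markup must be positive")
    return flat_fee / (at_cost_rate * markup)


# Hypothetical inputs: $2.00 per GPU-hour at cost, 50% competitor markup.
RATE, MARKUP = 2.0, 0.5
print(break_even_hours(RATE, MARKUP))      # 1200.0 GPU-hours
print(pass_through_cost(3000, RATE))       # 7200.0
print(markup_cost(3000, RATE, MARKUP))     # 9000.0
```

Under these assumed numbers, the flat fee pays for itself above 1,200 GPU-hours per month. Below that volume, the marked-up provider would be the cheaper option.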

What is Potassium?

Potassium is Banana's open-source HTTP framework for writing AI inference backends. It handles model initialization and request routing, allowing developers to focus on model logic in Python with minimal setup.

Does Banana support custom GPU types?

Yes, the Team plan and above support custom GPU types, enabling teams to select the hardware best suited to their model's compute and memory requirements.

What DevOps tooling does Banana include?

Banana includes GitHub integration, CI/CD pipelines, a CLI, rolling deployments, branch deployments, environment management, real-time tracing and logs, and a full Automation API with SDKs.

What plans does Banana offer?

Banana offers two plans. The Team plan costs $1,200/month plus at-cost compute and covers up to 10 team members, 5 projects, and 50 parallel GPUs. The custom Enterprise plan adds SAML SSO, higher parallel GPU limits, customizable inference queues, and dedicated support.