About

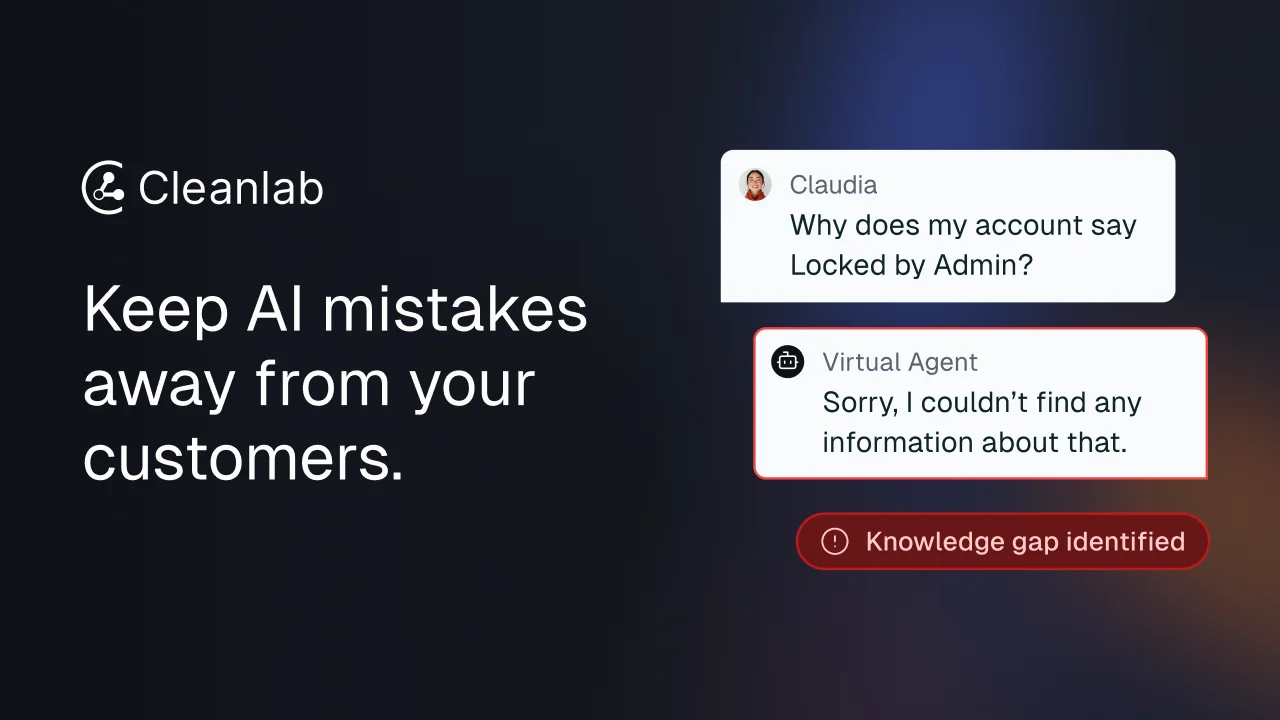

Cleanlab is a production AI reliability platform designed to make AI agents trustworthy at scale by catching mistakes before they reach end users. Originally spun out of MIT research, it has been acquired by Handshake AI and is recognized among the top AI hallucination detection tools globally. The platform works as an independent safety layer that integrates with any existing AI system or knowledge base.

Its two core capabilities are detection and remediation. On the detection side, Cleanlab applies real-time trust scores and configurable guardrails to automatically intercept hallucinations, retrieval errors, documentation gaps, policy violations, and malicious prompt attempts. On the remediation side, it provides a no-code human-in-the-loop workflow that lets non-technical subject matter experts correct AI responses, improve knowledge bases, and refine guardrails without engineering support.

Cleanlab is purpose-built for high-stakes AI deployments such as customer support agents and internal employee-facing assistants. It enables smooth escalation from AI to human support, helps organizations transition safely from static to AI-powered responses, and pairs automated safety checks with human oversight. Deployment options include private VPC installation for full data control and secure managed SaaS for streamlined access. It is a good fit for startups and enterprises deploying LLMs in regulated or customer-facing environments where safety, compliance, and brand trust are non-negotiable.

Key Features

- Real-Time Hallucination Detection: Automatically identifies and blocks hallucinations, retrieval errors, documentation gaps, policy violations, and malicious prompts before responses reach end users.

- Trust Scores & Configurable Guardrails: Assigns real-time trust scores to every AI response and applies customizable guardrails to enforce your organization's safety and compliance standards.

- No-Code Human-in-the-Loop Remediation: Empowers non-technical subject matter experts to review flagged responses, correct answers, update knowledge base sources, and tune guardrails without writing code.

- Universal AI Stack Compatibility: Deploys as an independent layer on top of any AI system or knowledge base, integrating without requiring changes to your existing infrastructure.

- Flexible Deployment (VPC & SaaS): Supports private VPC installation for teams requiring full data sovereignty and a managed SaaS option for teams that prefer zero-infrastructure overhead.
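To make the trust-score and guardrail idea above more concrete, here is a minimal Python sketch of how a safety layer might gate a response before delivery. It is an illustration under stated assumptions, not Cleanlab's API: the `Verdict` type, the `apply_guardrails` function, the 0.8 threshold, and the banned-phrase check are all hypothetical, and the trust score is assumed to arrive as a number between 0 and 1.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Verdict:
    deliver: bool           # True if the response may go to the end user
    reason: Optional[str]   # why the response was held back, if it was


def apply_guardrails(
    response: str,
    trust_score: float,
    threshold: float = 0.8,              # hypothetical default cut-off
    banned_phrases: Sequence[str] = (),  # hypothetical stand-in for a policy rule
) -> Verdict:
    """Decide whether a response is delivered or held for human review."""
    if not 0.0 <= trust_score <= 1.0:
        raise ValueError("trust_score is assumed to lie in [0, 1]")

    # Guardrail 1: hold back responses whose trust score is too low.
    if trust_score < threshold:
        return Verdict(deliver=False, reason="low_trust_score")

    # Guardrail 2: hold back responses that contain a disallowed phrase.
    lowered = response.lower()
    for phrase in banned_phrases:
        if phrase.lower() in lowered:
            return Verdict(deliver=False, reason="policy_violation")

    return Verdict(deliver=True, reason=None)
```

In a setup like this, a response that is not delivered would be routed to a human reviewer rather than shown to the user, which mirrors the escalation path described in the use cases.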

Use Cases

- Customer support teams deploying AI agents that need reliable escalation to human agents when the AI is uncertain or produces an incorrect response.

- Enterprises building internal employee-facing AI assistants that must comply with company policies and consistently provide accurate, trustworthy information.

- Organizations in regulated industries such as healthcare, finance, or legal that must verify AI outputs for compliance before they surface to users.

- AI engineering teams looking to monitor and improve LLM-based pipelines in production without retraining models or overhauling existing infrastructure.

- Companies transitioning from static FAQ or knowledge base systems to AI-powered responses who need safety guardrails and oversight tooling during the rollout.

Pros

- Stack-Agnostic Integration: Works with any AI agent or knowledge base without requiring modifications to existing infrastructure, making adoption low-friction for engineering teams.

- Enables Non-Technical Teams to Improve AI: Subject matter experts can fix AI responses and refine guardrails independently, reducing the bottleneck on engineering and accelerating iteration cycles.

- Research-Backed Credibility: Founded on MIT research, recognized with the IJCAI-JAIR Best Paper Prize, and endorsed by AI leaders including Andrew Ng.

- Enterprise Deployment Flexibility: VPC and SaaS options make it suitable for regulated industries with strict data residency, security, and compliance requirements.

Cons

- No Transparent Self-Serve Pricing: Access requires booking a demo, and there are no publicly listed plans or free tier, making it difficult for smaller teams to evaluate costs upfront.

- Potential Latency Overhead: Inserting a real-time detection middleware layer between AI and end users may introduce additional response latency depending on the deployment setup.

- Requires an Existing AI Pipeline: Cleanlab does not build or host AI models—it functions only as a safety layer on top of an existing LLM agent or knowledge base system.
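Teams concerned about the latency point above can measure the overhead directly before committing to a deployment. The Python sketch below times an agent call and a detection step separately. Both `fake_agent` and `fake_check` are hypothetical stand-ins, and the 10 ms sleep is an arbitrary placeholder, not a measured figure for any real product.

```python
import time
from typing import Any, Callable, Tuple


def timed(fn: Callable[..., Any], *args: Any) -> Tuple[Any, float]:
    """Run fn(*args) and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


def fake_agent(question: str) -> str:
    # Hypothetical stand-in for the existing AI agent.
    return f"Answer to: {question}"


def fake_check(response: str) -> str:
    # Hypothetical stand-in for a detection layer; the sleep simulates its cost.
    time.sleep(0.01)
    return response


answer, agent_seconds = timed(fake_agent, "How do I reset my password?")
checked, check_seconds = timed(fake_check, answer)

print(f"agent: {agent_seconds * 1000:.2f} ms")
print(f"added by check: {check_seconds * 1000:.2f} ms")
```

Running the same measurement against the real agent and the real detection layer, in the intended deployment setup, shows how much of the end-to-end response time the extra step accounts for.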

Frequently Asked Questions

What kinds of AI errors does Cleanlab detect?

Cleanlab detects hallucinations, retrieval errors, documentation gaps, policy violations, and malicious use attempts, assigning real-time trust scores to flag problematic responses before they reach users.

Do I need to change my existing AI system to use Cleanlab?

No. Cleanlab deploys as an independent layer and integrates with any existing AI system or knowledge base without requiring modifications to your current infrastructure or models.

What deployment options does Cleanlab offer?

Cleanlab offers private VPC deployment, which keeps all data within your own cloud environment, and a managed SaaS option for teams that prefer not to manage infrastructure themselves.

Who is Cleanlab designed for?

Cleanlab is designed for startups and enterprises deploying AI agents in high-stakes environments, such as customer support and internal employee assistants, where accuracy, safety, and regulatory compliance are critical.

How can non-technical team members correct AI responses?

Cleanlab provides a human-in-the-loop interface that allows subject matter experts to review flagged AI responses, correct answers, update knowledge base sources, and adjust guardrails, all without writing any code.