About

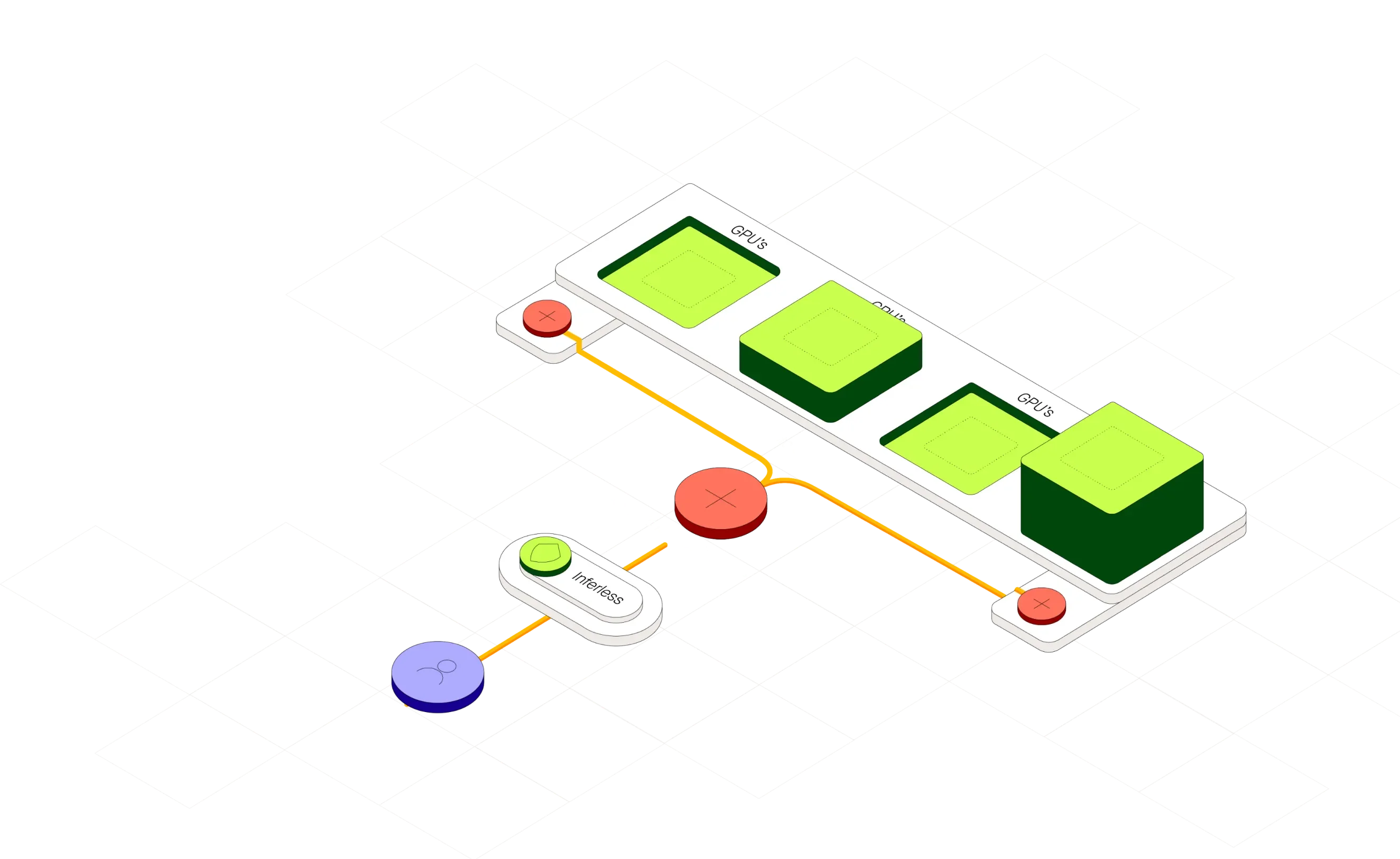

Inferless is a serverless GPU inference platform for production machine learning deployments. Developers and companies can take a model from file to live API endpoint in minutes by importing it from Hugging Face, Git, Docker, or a CLI, with optional automated CI/CD rebuilds. The platform targets spiky, unpredictable workloads: it auto-scales from zero to hundreds of GPUs with minimal cold-start latency, so teams pay only for the compute they use instead of provisioning idle capacity.

Key capabilities include custom runtime containers for full dependency control, NFS-like writable volumes that multiple replicas can connect to simultaneously, server-side dynamic batching to raise throughput, and private endpoints with configurable concurrency, timeouts, and webhook settings. Detailed call and build logs support model monitoring and iteration.

Inferless is SOC-2 Type II certified and regularly penetration-tested, which makes it suitable for enterprise deployments. Customers report GPU cloud bill savings of up to 90% compared to traditional clusters, with same-day go-live timelines. It suits ML engineers, AI startups, and enterprises that run custom open-source models and need scalable, cost-efficient inference without managing GPU infrastructure. Inferless has since joined Baseten, further strengthening its infrastructure capabilities.

Key Features

- Serverless GPU Auto-Scaling: Automatically scales from zero to hundreds of GPUs based on real-time demand, with no idle costs and minimal cold-start overhead.

- Multi-Source Model Deployment: Import models directly from Hugging Face, Git repositories, Docker images, or via CLI, with optional automated CI/CD rebuilds on code changes.

- Dynamic Batching: Combines concurrent requests into batches on the server side, increasing throughput for high-QPS workloads without additional infrastructure changes.

- Custom Runtime & Volumes: Fully customizable containers for software dependencies and NFS-like writable volumes supporting simultaneous replica connections.

- Enterprise-Grade Security & Monitoring: SOC-2 Type II certified platform with penetration testing, detailed call/build logs, and private endpoint configuration.
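The scale-from-zero behavior listed under Serverless GPU Auto-Scaling can be illustrated with a small sketch. This is not Inferless's actual scaling algorithm, which the source does not describe; it only shows one common way to derive a replica count from demand. The function name `desired_replicas` and its parameters are hypothetical.

```python
import math


def desired_replicas(in_flight: int, per_replica_concurrency: int, max_replicas: int) -> int:
    """Return how many replicas are needed for the current load.

    Hypothetical illustration only: real autoscalers also smooth the
    signal over time and account for cold-start delay.
    """
    if per_replica_concurrency <= 0:
        raise ValueError("per_replica_concurrency must be positive")
    if in_flight <= 0:
        return 0  # scale to zero: no requests, no running GPUs
    needed = math.ceil(in_flight / per_replica_concurrency)
    return min(needed, max_replicas)  # never exceed the configured ceiling
```

For example, 9 in-flight requests with a per-replica concurrency of 4 yield 3 replicas, and an idle endpoint yields 0.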

Use Cases

- An AI startup deploying a custom embedding or fine-tuned LLM to production without building internal GPU infrastructure.

- An ML engineering team needing rapid iteration on model deployments with automated CI/CD rebuilds triggered by code changes.

- A company running computer vision or NLP models with unpredictable traffic spikes, leveraging auto-scaling to avoid over-provisioning.

- A researcher or indie developer hosting open-source models (e.g., from Hugging Face) via a cost-efficient pay-per-use API endpoint.

- An enterprise team replacing dedicated GPU clusters with serverless inference to reduce cloud costs while maintaining SOC-2 compliance.

Pros

- Massive Cost Savings: Users report up to 90% reduction in GPU cloud costs compared to traditional dedicated clusters, thanks to pay-per-use serverless billing.

- Rapid Deployment: Models can go from file to live endpoint in under a day, drastically reducing time-to-production for ML teams.

- Zero Infrastructure Management: No cluster provisioning, scaling configuration, or maintenance is required; Inferless handles all GPU orchestration automatically.

- Production-Ready Security: SOC-2 Type II certification and regular penetration testing make it suitable for enterprise and regulated environments.

Cons

- Usage-Based Costs Can Be Unpredictable: Pay-per-use billing is cost-efficient for variable workloads, but sustained high traffic can make bills harder to predict than with reserved instances.

- Cold Start Latency: Despite optimizations, scaling from zero may still introduce brief latency spikes for latency-sensitive applications at low traffic volumes.

- Vendor Lock-In Risk: Deep integration with Inferless's deployment and runtime model may require migration effort if switching providers in the future.

Frequently Asked Questions

What types of models does Inferless support?

Inferless supports any custom ML model built on open-source frameworks. You can deploy models from Hugging Face, Git repositories, Docker images, or directly via the CLI.

How does Inferless pricing work?

Inferless charges based on actual GPU compute hours used, not a flat monthly fee. When there are no requests, it scales to zero so you incur no idle costs.
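The trade-off between usage-based billing and an always-on GPU comes down to simple arithmetic, sketched below. The hourly rates and the 730-hour month are made-up illustrative values, not Inferless prices, and the function names are hypothetical.

```python
HOURS_IN_MONTH = 730.0  # assumed average month length in hours


def monthly_cost_serverless(busy_hours: float, rate_per_gpu_hour: float) -> float:
    """Pay only for hours in which the GPU is actually serving requests."""
    return busy_hours * rate_per_gpu_hour


def monthly_cost_dedicated(rate_per_gpu_hour: float) -> float:
    """An always-on GPU is billed for every hour, busy or idle."""
    return HOURS_IN_MONTH * rate_per_gpu_hour


def break_even_busy_hours(serverless_rate: float, dedicated_rate: float) -> float:
    """Busy hours per month above which the dedicated GPU becomes cheaper."""
    return HOURS_IN_MONTH * dedicated_rate / serverless_rate
```

With hypothetical rates of 2.00 per GPU-hour for serverless and 1.00 for dedicated, a model that is busy 73 hours a month costs 146 on serverless versus 730 on a dedicated GPU. The break-even point under those assumed rates is 365 busy hours, which is why sustained high traffic shifts the comparison toward reserved capacity.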

How quickly can I deploy a model?

Most models can go from import to live API endpoint within minutes. Customers report same-day production deployments even for complex custom models.

Is Inferless suitable for enterprise workloads?

Yes. Inferless is SOC-2 Type II certified, undergoes regular penetration testing, and offers private endpoints, concurrency controls, and webhook configuration for enterprise workloads.

What is dynamic batching?

Dynamic batching combines multiple simultaneous inference requests server-side into a single batch, significantly increasing GPU throughput and reducing per-request cost at high query volumes.
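A minimal sketch of the idea follows, assuming a simple "batch is full or wait window has closed" policy. It is a generic illustration of dynamic batching, not Inferless's implementation; `collect_batch`, `run_model_on_batch`, `max_batch_size`, and `max_wait_s` are hypothetical names.

```python
import queue
import time
from typing import List


def collect_batch(pending: "queue.Queue[str]", max_batch_size: int, max_wait_s: float) -> List[str]:
    """Wait for one request, then gather more until the batch is full
    or the wait window closes, whichever comes first."""
    batch = [pending.get()]  # block until at least one request arrives
    deadline = time.monotonic() + max_wait_s
    while len(batch) < max_batch_size:
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            break  # wait window closed: send what we have
        try:
            batch.append(pending.get(timeout=remaining))
        except queue.Empty:
            break  # no more requests arrived in time
    return batch


def run_model_on_batch(inputs: List[str]) -> List[str]:
    """Stand-in for a single batched GPU forward pass (hypothetical)."""
    return [text.upper() for text in inputs]
```

With five requests queued and `max_batch_size=4`, the first call returns the first four requests as one batch, and the next call returns the remaining one after the wait window expires. A larger `max_wait_s` produces fuller batches at the cost of added latency for the first request in each batch.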