About

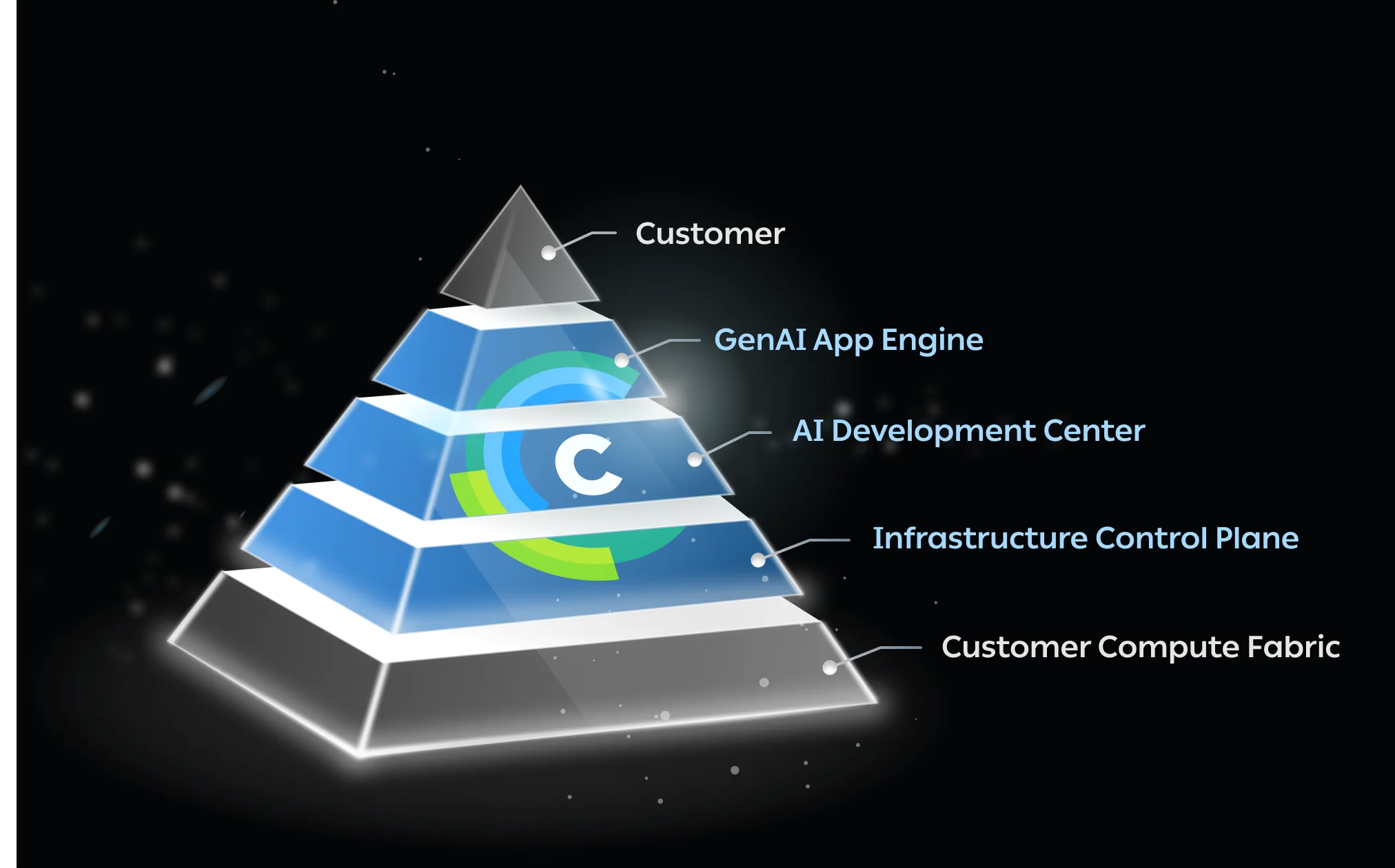

ClearML is an AI infrastructure platform built for organizations that run AI workloads at enterprise scale. It is organized into three layers: the Infrastructure Control Plane, the AI Development Center, and the GenAI App Engine.

The Infrastructure Control Plane lets IT and MLOps teams connect and manage GPU clusters across on-premises, cloud, and hybrid environments, with priority-based job scheduling, dynamic fractional GPU allocation, and quota management to maximize compute utilization. The AI Development Center provides an environment for developing, training, and evaluating AI models from anywhere. The GenAI App Engine simplifies LLM deployment onto managed clusters by handling networking, authentication, and security automatically, so teams can launch GenAI workloads with a single click.

ClearML also includes enterprise-grade security with multi-tenancy, role-based access control (RBAC), and granular billing. It is used by over 2,100 organizations globally, including research labs, financial services firms, defense contractors, and telecoms. Whether you are a data scientist training models, an MLOps engineer managing pipelines, or an IT administrator provisioning GPU resources, ClearML is designed to bring order and efficiency to the full AI production lifecycle.

Key Features

- Infrastructure Control Plane: Connect and manage GPU clusters across on-premises, cloud, and hybrid environments with priority-based scheduling, fractional GPU allocation, and quota management.

- AI Development Center: A robust, remotely accessible environment for developing, training, and testing AI models with full experiment tracking and collaboration tools.

- GenAI App Engine: Deploy LLMs onto your GPU clusters with a single click, with ClearML managing networking, authentication, load balancing, and security automatically.

- Enterprise Multi-Tenancy & RBAC: Secure multi-tenant architecture with isolated networks and storage per tenant, role-based access control, and granular billing for cost accountability.

- Compute Utilization Optimization: Maximize GPU ROI with dynamic resource sharing, workload scheduling across diverse cluster setups, and real-time utilization monitoring.
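To make the scheduling terms above concrete, here is a minimal, self-contained Python sketch of priority-based admission with fractional GPU requests and per-team quotas. It is an illustration only and does not use or represent ClearML's actual scheduler or API; the `Job` class, the `schedule` function, and all job, team, and quota values are hypothetical.

```python
import heapq
from dataclasses import dataclass
from itertools import count


@dataclass
class Job:
    name: str
    team: str
    priority: int        # higher value is admitted first
    gpu_fraction: float  # GPUs requested; 0.5 means half of one GPU


def schedule(jobs, total_gpus, team_quota):
    """Admit jobs by priority while respecting capacity and per-team quotas.

    Hypothetical toy model, not ClearML's implementation.
    Returns (admitted_names, deferred_names).
    """
    order = count()  # tie-breaker: equal priorities keep submission order
    # heapq is a min-heap, so priorities are negated to pop the highest first.
    heap = [(-job.priority, next(order), job) for job in jobs]
    heapq.heapify(heap)

    free = total_gpus
    used = {team: 0.0 for team in team_quota}
    admitted, deferred = [], []

    while heap:
        _, _, job = heapq.heappop(heap)
        team_used = used.get(job.team, 0.0)
        within_quota = team_used + job.gpu_fraction <= team_quota.get(job.team, 0.0)
        if job.gpu_fraction <= free and within_quota:
            free -= job.gpu_fraction
            used[job.team] = team_used + job.gpu_fraction
            admitted.append(job.name)
        else:
            # Not enough free capacity or the team would exceed its quota.
            deferred.append(job.name)
    return admitted, deferred


jobs = [
    Job("nightly-eval", "research", priority=1, gpu_fraction=0.5),
    Job("llm-finetune", "research", priority=5, gpu_fraction=1.0),
    Job("fraud-model", "risk", priority=3, gpu_fraction=0.5),
    Job("ad-hoc-notebook", "risk", priority=2, gpu_fraction=0.25),
]
admitted, deferred = schedule(
    jobs, total_gpus=2.0, team_quota={"research": 1.0, "risk": 1.0}
)
print("admitted:", admitted)
print("deferred:", deferred)
```

In this toy run the lowest-priority research job is deferred because the research team's quota is already consumed by the higher-priority fine-tuning job, even though some GPU capacity remains free. A production scheduler would also need to handle preemption, queueing of deferred jobs, and placement on specific devices, none of which is modeled here.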

Use Cases

- Managing and optimizing GPU clusters across hybrid on-premises and cloud environments for large AI teams.

- Running and tracking ML training experiments at scale with centralized experiment management.

- Deploying and serving open-source or proprietary LLMs in production with managed infrastructure.

- Providing GPU-as-a-Service to multiple internal teams or external tenants with quota management and billing.

- Automating MLOps pipelines from development through testing to production deployment.

Pros

- All-in-One Platform: Covers the entire AI lifecycle — from infrastructure provisioning and model training to GenAI deployment — eliminating the need for multiple disparate tools.

- Flexible Deployment: Supports on-premises, cloud, and hybrid GPU environments, giving organizations full control over where and how their workloads run.

- Enterprise-Grade Security: Built-in multi-tenancy, RBAC, and isolated storage help large, multi-team organizations meet compliance and data-protection requirements.

- One-Click GenAI Deployment: The GenAI App Engine dramatically reduces the complexity of deploying and serving LLMs, making production rollouts fast and repeatable.

Cons

- Steep Learning Curve: The platform's breadth and enterprise focus can be overwhelming for smaller teams or individual researchers just getting started.

- Primarily Enterprise-Focused: Many of its most powerful features — such as multi-tenancy and granular billing — are more valuable at scale, making it potentially over-engineered for smaller projects.

- Cost at Scale: Enterprise licensing and GPU management costs can be significant, and pricing for larger deployments is available only through a demo or sales conversation.

Frequently Asked Questions

What is ClearML?

ClearML is an enterprise AI infrastructure platform that helps organizations manage GPU clusters, streamline ML development workflows, and deploy GenAI models. It consists of three core layers: the Infrastructure Control Plane, the AI Development Center, and the GenAI App Engine.

Does ClearML support on-premises, cloud, and hybrid environments?

Yes. ClearML's Infrastructure Control Plane is designed to connect and manage GPU resources across on-premises, cloud, and hybrid environments, giving teams flexibility in where they run their workloads.

How does the GenAI App Engine work?

The GenAI App Engine allows teams to deploy LLMs onto their managed GPU clusters with a single click. ClearML handles the underlying networking, authentication, load balancing, and security, so teams can focus on their models rather than infrastructure.

Is ClearML free to use?

ClearML offers a free tier to get started, with paid plans available for teams and enterprises that need advanced features like multi-tenancy, RBAC, granular billing, and dedicated support.

Who is ClearML built for?

ClearML is built for enterprise AI teams, including data scientists, ML engineers, MLOps professionals, and IT administrators, across industries like research, financial services, defense, telecommunications, and the public sector.