About

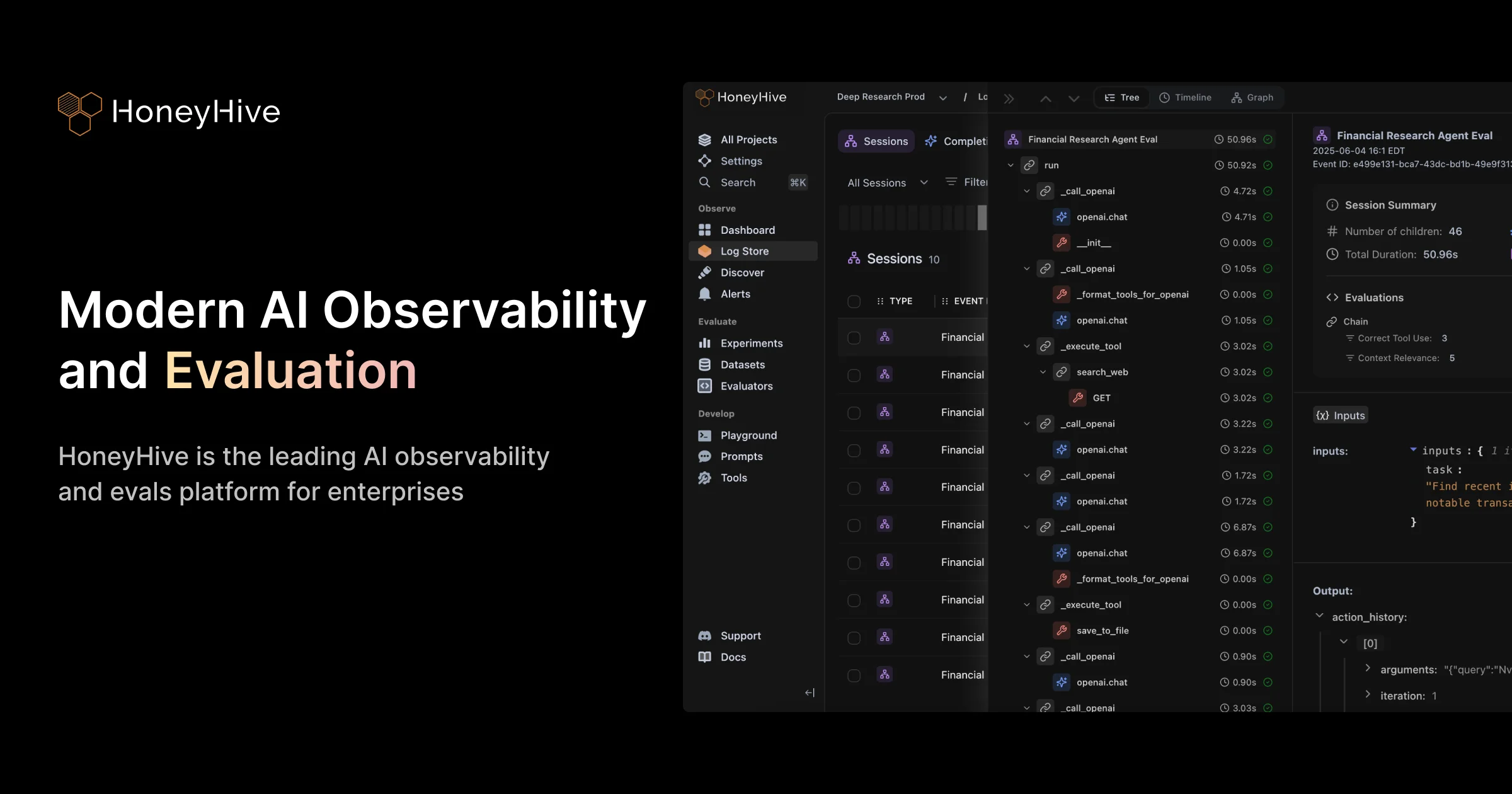

HoneyHive is a comprehensive AI observability and evaluation platform designed for teams building and operating AI agents in production. It covers every stage of the Agent Development Lifecycle (ADLC), from debugging during development to continuous monitoring and regression detection after deployment.

Its distributed tracing engine is OpenTelemetry-native and integrates with 100+ LLMs and agent frameworks, giving teams end-to-end visibility into complex multi-agent workflows through graph and timeline views, session replays, and powerful trace filtering.

For quality assurance, HoneyHive supports offline experiments, CI/CD integration for automated test suites, and customizable LLM-as-a-judge or code-based evaluators. Teams can build and version datasets from production failures, run side-by-side comparisons of agent versions, and catch regressions before every release.

Online evaluation and monitoring capabilities allow teams to track quality, latency, and cost on live traffic in real time, with drift detection and alerting for silent failures. Annotation queues route flagged traces to subject-matter experts for human review, with custom rubrics, audit trails, and dataset curation to continuously align evaluators with real-world business requirements.

HoneyHive offers enterprise-grade security including SOC 2 Type II certification, GDPR and HIPAA compliance, fine-grained RBAC, SAML/SSO, and flexible deployment options from multi-tenant SaaS to full self-hosting. It is ideal for AI engineers, MLOps teams, and enterprise organizations that need trustworthy, production-ready AI agents.

Key Features

- Distributed Tracing: OpenTelemetry-native end-to-end tracing across 100+ LLMs and agent frameworks, with graph/timeline views, session replays, and trace filtering across millions of records.

- Online Monitoring & Alerts: Run live evaluations on production traffic to detect quality, safety, and reliability failures in real time, with drift detection and configurable alerting.

- Automated Experiments & CI/CD: Test agents offline against versioned datasets, compare workflows side-by-side, and integrate regression detection directly into CI/CD pipelines.

- Expert Annotation Queues: Route flagged traces to domain experts for human review with custom rubrics, audit trails, and feedback loops that align LLM evaluators with real business criteria.

- Flexible & Secure Deployment: Deploy as multi-tenant SaaS, single-tenant SaaS, hybrid, or fully self-hosted. SOC 2 Type II, GDPR, and HIPAA compliant with SAML/SSO and fine-grained RBAC.
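To make the drift detection idea under Online Monitoring & Alerts more concrete, here is a minimal sketch of a rolling-window check on evaluator scores. It is a generic illustration and not HoneyHive's API: the `DriftMonitor` class, its parameters, and the sample scores are all hypothetical, and a real platform may use a different statistical method.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Hypothetical rolling-window drift check; not part of any HoneyHive SDK."""

    def __init__(self, baseline_mean: float, window: int = 50, threshold: float = 0.1):
        self.baseline_mean = baseline_mean
        self.threshold = threshold
        # Keep only the most recent `window` scores.
        self.scores = deque(maxlen=window)

    def observe(self, score: float) -> bool:
        """Record one score; return True when the window is full and its mean
        has dropped more than `threshold` below the baseline."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False
        return self.baseline_mean - mean(self.scores) > self.threshold


# Made-up quality scores from live traffic, degrading over time.
monitor = DriftMonitor(baseline_mean=0.9, window=5, threshold=0.1)
live_scores = [0.9, 0.88, 0.91, 0.7, 0.65, 0.6, 0.62]
alerts = [monitor.observe(s) for s in live_scores]
```

In this sketch an alert fires only once the window is full, which avoids noisy alerts on the first few requests at the cost of a short detection delay.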

Use Cases

- Debugging complex multi-agent AI workflows in production using distributed traces, session replays, and timeline views.

- Running automated regression tests against versioned datasets in CI/CD pipelines before shipping new agent versions.

- Continuously monitoring LLM agent quality, latency, and cost on live traffic with real-time drift detection and alerts.

- Routing edge-case traces to subject-matter experts for annotation and human review to improve evaluator alignment.

- Meeting enterprise compliance requirements for AI deployments in regulated industries such as banking, healthcare, and finance.

Pros

- Enterprise-Grade Security & Compliance: SOC 2 Type II certified, GDPR and HIPAA compliant, with SAML/SSO, RBAC, and full self-hosting options — meeting the strictest enterprise requirements.

- Broad Framework & Model Coverage: OpenTelemetry-native integration with 100+ LLMs and agent frameworks means teams can adopt HoneyHive without re-architecting their existing stack.

- Full ADLC Coverage: From development-time debugging and experiments to production monitoring and expert annotation, HoneyHive covers every phase of the agent development lifecycle in one platform.

- Human-in-the-Loop Workflows: Built-in annotation queues and expert review interfaces make it easy to incorporate domain expert feedback to continuously improve agent quality.

Cons

- Enterprise-Oriented Pricing: Full-featured plans with advanced security and compliance controls are geared toward large organizations, which may be cost-prohibitive for solo developers or small teams.

- Steep Learning Curve: The breadth of features — tracing, evals, experiments, annotations, monitoring — requires meaningful onboarding investment to fully operationalize.

- Self-Hosting Complexity: While self-hosting is available for maximum data control, it requires additional infrastructure management overhead compared to fully managed SaaS alternatives.

Frequently Asked Questions

What is HoneyHive and who is it for?

HoneyHive is an AI observability and evaluation platform designed for engineering and MLOps teams building AI agents. It is particularly suited for enterprises that need to operate agents reliably in production, with customers ranging from AI startups to Fortune 500 companies.

Which LLMs and frameworks does HoneyHive work with?

HoneyHive is OpenTelemetry-native and supports 100+ LLMs and agent frameworks out of the box, making it compatible with virtually any modern AI stack without requiring major code changes.

What types of evaluation does HoneyHive support?

HoneyHive supports both online evaluations (running live evals on production traffic) and offline experiments (testing against versioned datasets). Teams can write custom LLM-as-a-judge or code-based evaluators, and incorporate human expert review through annotation queues.
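For readers unfamiliar with the term, a code-based evaluator is typically just a deterministic function that scores an agent's output. The sketch below shows one possible shape, assuming the agent is expected to return a JSON object with certain keys. The function name, return format, and sample inputs are hypothetical and do not reflect HoneyHive's actual evaluator interface.

```python
import json


def json_schema_evaluator(output: str, required_keys: set) -> dict:
    """Hypothetical code-based evaluator: passes when `output` is a JSON
    object containing every key in `required_keys`."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return {"passed": False, "reason": "output is not valid JSON"}
    if not isinstance(parsed, dict):
        return {"passed": False, "reason": "output is not a JSON object"}
    missing = sorted(required_keys - set(parsed))
    if missing:
        return {"passed": False, "reason": f"missing keys: {missing}"}
    return {"passed": True, "reason": "ok"}


# Made-up agent outputs for illustration.
good = json_schema_evaluator('{"answer": "42", "sources": []}', {"answer", "sources"})
bad = json_schema_evaluator('{"answer": "42"}', {"answer", "sources"})
```

Returning a reason alongside the pass/fail flag makes failures easier to triage when the same check runs over a whole dataset.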

Is HoneyHive suitable for organizations with strict security and compliance requirements?

Yes. HoneyHive is SOC 2 Type II certified and compliant with GDPR and HIPAA. It also offers SAML/SSO, fine-grained RBAC, and flexible deployment options including full self-hosting for organizations with strict data residency requirements.

Does HoneyHive integrate with CI/CD pipelines?

Yes. HoneyHive provides native CI/CD integration so teams can run automated evaluation test suites on every commit, enabling regression detection and quality gates before each release.
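As a rough illustration of what a quality gate does, the sketch below compares the pass rate of a candidate agent version against a baseline and produces an exit code a CI job could use. The `regression_gate` function, the `max_drop` tolerance, and the score lists are assumptions made for this example. They are not HoneyHive's CI integration.

```python
def regression_gate(baseline_scores, candidate_scores, max_drop=0.02):
    """Return 0 (pass) or 1 (fail), mirroring a process exit code.

    Fails when the candidate's mean score falls more than `max_drop`
    below the baseline's mean score on the same dataset.
    """
    baseline = sum(baseline_scores) / len(baseline_scores)
    candidate = sum(candidate_scores) / len(candidate_scores)
    return 1 if baseline - candidate > max_drop else 0


# Made-up per-example pass (1) / fail (0) results on a versioned dataset.
baseline_run = [1, 1, 1, 0, 1, 1, 1, 1, 0, 1]   # mean 0.8
candidate_run = [1, 1, 0, 0, 1, 1, 0, 1, 0, 1]  # mean 0.6

exit_code = regression_gate(baseline_run, candidate_run)
# In a CI job this value would be passed to sys.exit();
# a non-zero exit code fails the build and blocks the release.
```

A small tolerance such as `max_drop` is one way to keep the gate from failing on normal run-to-run noise in evaluator scores.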