About

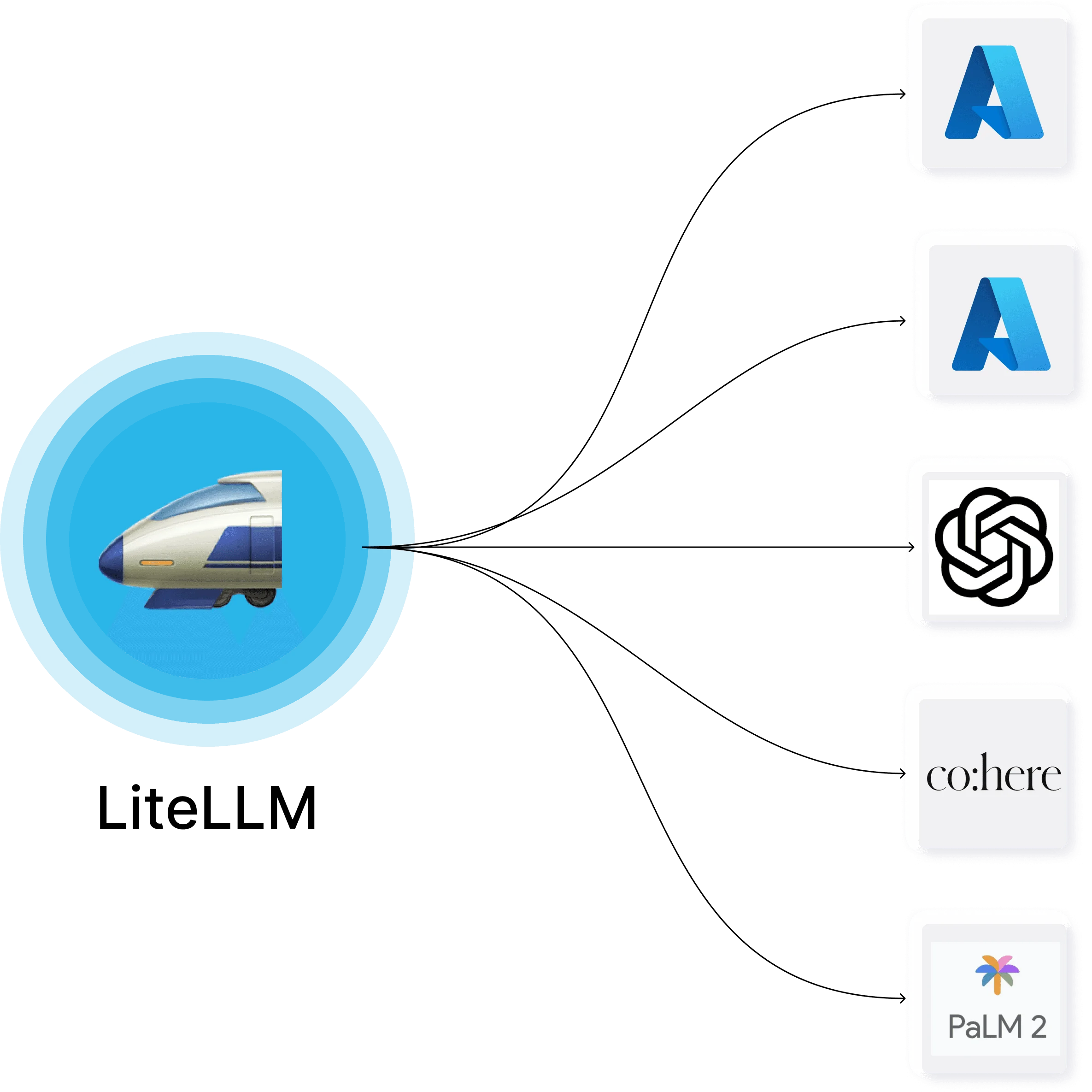

LiteLLM is an open-source LLM gateway that simplifies how platform and infrastructure teams give developers access to large language models. Instead of integrating each provider separately, teams call a unified OpenAI-compatible API that LiteLLM proxies to over 100 LLM providers, including OpenAI, Azure OpenAI, Google Gemini, AWS Bedrock, and Anthropic, without custom input/output transformations per provider.

Key capabilities include granular spend tracking that attributes costs to keys, users, teams, or organizations; configurable budgets and rate limits in requests per minute (RPM) and tokens per minute (TPM); automatic LLM fallbacks for high availability; virtual key management; and prompt management. LiteLLM also integrates with observability tools such as Langfuse, Arize Phoenix, and LangSmith via OpenTelemetry, and supports logging to S3 and GCS.

The open-source tier is free and covers all core features. An Enterprise tier adds SSO, JWT auth, audit logs, custom SLAs, and support for large-scale developer access programs. LiteLLM can be self-hosted on-premises or deployed in the cloud via Docker, making it suitable for organizations with strict data residency requirements.

LiteLLM is aimed at AI platform teams, MLOps engineers, and enterprises that need to standardize LLM access, enforce governance policies, and track AI spend across multiple teams and projects. With 40K+ GitHub stars and 1,000+ contributors, it is one of the most widely adopted open-source LLM infrastructure tools.
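To illustrate what a unified OpenAI-compatible API means in practice, here is a minimal sketch that builds (but does not send) two chat requests for different providers. The gateway address `http://localhost:4000`, the `/chat/completions` route, the model names, and the key `sk-example-virtual-key` are assumptions chosen for illustration, not values taken from this page.

```python
import json
import urllib.request

# Assumptions for illustration only: the gateway address, the route,
# the model names, and the virtual key below are placeholders.
GATEWAY_URL = "http://localhost:4000"


def build_chat_request(model, prompt, api_key, base_url=GATEWAY_URL):
    """Build (but do not send) an OpenAI-format chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Only the model name differs between the two requests; the URL,
# headers, and message format stay the same.
openai_req = build_chat_request("gpt-4o", "Hello", "sk-example-virtual-key")
claude_req = build_chat_request("claude-sonnet", "Hello", "sk-example-virtual-key")
```

The point of the sketch is that switching providers changes one string in the payload rather than the client code around it.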

Key Features

- 100+ LLM Provider Support: Connect to OpenAI, Azure, AWS Bedrock, Google Gemini, Anthropic, and 95+ other providers through a single OpenAI-compatible API—no custom integration per provider needed.

- Spend Tracking & Budgets: Automatically track and attribute LLM costs to individual API keys, users, teams, or organizations. Set hard budget limits and receive alerts before thresholds are exceeded.

- Rate Limiting & LLM Fallbacks: Configure RPM/TPM rate limits per key or team, and define automatic fallback chains so requests reroute to backup models when a provider is unavailable or rate-limited.

- Virtual Key Management: Issue and revoke virtual API keys that abstract underlying provider credentials, enabling fine-grained access control without exposing raw provider secrets to developers.

- Observability & Logging: Integrates natively with Langfuse, Arize Phoenix, LangSmith, and OpenTelemetry for full LLM observability, with support for logging to S3, GCS, and other storage backends.
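The fallback behavior listed above can be pictured with a small, generic sketch. This is not LiteLLM's implementation; `ProviderUnavailable`, `complete_with_fallbacks`, and the two stand-in provider functions are hypothetical names used only to show the idea of trying models in order until one succeeds.

```python
class ProviderUnavailable(Exception):
    """Raised by a hypothetical provider call that is down or rate-limited."""


def complete_with_fallbacks(prompt, chain):
    """Try each (name, call) pair in order and return the first success."""
    errors = []
    for name, call in chain:
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            # Record the failure and move on to the next model in the chain.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all models in the fallback chain failed: " + "; ".join(errors))


# Stand-in provider calls so the sketch runs without network access.
def primary(prompt):
    raise ProviderUnavailable("rate limited")


def backup(prompt):
    return f"echo: {prompt}"


used, reply = complete_with_fallbacks(
    "ping", [("primary-model", primary), ("backup-model", backup)]
)
```

Here the first model fails, so the request is served by `backup-model`. A gateway applies the same pattern centrally so that individual applications do not need their own retry logic.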

Use Cases

- Platform teams providing standardized, governed LLM access to internal developer teams across a large enterprise.

- MLOps engineers centralizing multi-provider LLM routing with automatic fallbacks to improve reliability and reduce downtime.

- Organizations tracking and attributing AI spend across business units, projects, or clients for cost control and chargeback.

- Startups building LLM-powered products who want to avoid vendor lock-in by abstracting provider-specific APIs behind a unified interface.

- AI infrastructure teams enforcing rate limits, budget caps, and access policies without exposing raw provider credentials to end users.
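As a rough picture of what a per-key RPM limit involves, the following sketch implements a fixed-window counter. It is an illustrative assumption about one way to enforce such a limit, not a description of how LiteLLM does it; the class name `RpmLimiter` and its `clock` parameter are hypothetical.

```python
import time


class RpmLimiter:
    """Fixed-window requests-per-minute limiter keyed by virtual key."""

    def __init__(self, rpm_limit, clock=time.monotonic):
        self.rpm_limit = rpm_limit
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.windows = {}   # key -> [window_start_seconds, request_count]

    def allow(self, key):
        """Return True and count the request if the key is under its limit."""
        now = self.clock()
        window = self.windows.get(key)
        if window is None or now - window[0] >= 60:
            # Start a fresh one-minute window for this key.
            window = [now, 0]
            self.windows[key] = window
        if window[1] >= self.rpm_limit:
            return False
        window[1] += 1
        return True
```

Each key gets its own counter, so one team exhausting its quota does not block another. A fixed window is the simplest variant; sliding-window or token-bucket schemes smooth out bursts at window boundaries.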

Pros

- Provider-Agnostic Unified Interface: A single OpenAI-format API eliminates the need to rewrite code when switching or adding LLM providers, saving significant engineering time.

- Battle-Tested at Scale: Used in production by companies like Netflix, with 1B+ requests served, 240M+ Docker pulls, and 1,000+ open-source contributors—a strong signal of reliability.

- Flexible Deployment: Fully self-hostable via Docker for on-prem or private cloud deployments, giving organizations complete control over data residency and network boundaries.

- Rich Ecosystem Integrations: Out-of-the-box integrations with popular observability, tracing, and logging tools mean teams can plug LiteLLM into existing MLOps stacks with minimal effort.

Cons

- Operational Overhead for Self-Hosting: Running LiteLLM in production requires managing your own infrastructure, including uptime, updates, and scaling—which adds DevOps burden for smaller teams.

- Enterprise Features Require Paid Tier: Advanced governance features like SSO, JWT auth, and audit logs are locked behind the Enterprise plan, which requires contacting sales for pricing.

- Primarily Developer-Focused: LiteLLM is designed for engineers and platform teams; non-technical stakeholders will find limited no-code or GUI-driven workflows out of the box.

Frequently Asked Questions

What is LiteLLM?

LiteLLM is an open-source AI Gateway (also called an LLM proxy) that provides a unified OpenAI-compatible API to route requests across 100+ LLM providers, with built-in spend tracking, rate limiting, and fallback management.

Is LiteLLM free to use?

Yes. The open-source version is completely free and includes core features like multi-provider support, virtual keys, budgets, load balancing, and observability integrations. An Enterprise plan with additional governance features is available for larger organizations.

Can LiteLLM be self-hosted?

Yes. LiteLLM is designed to be deployed on-premises or in your own cloud environment via Docker. This is ideal for organizations with data residency, security, or compliance requirements.

Which LLM providers does LiteLLM support?

LiteLLM supports 100+ providers including OpenAI, Azure OpenAI, AWS Bedrock, Google Gemini, Anthropic, Hugging Face, Cohere, Mistral, and many more, all accessible through one API.

How does LiteLLM track spend?

LiteLLM automatically tracks spend per API key, user, team, or organization. Costs are computed based on actual token usage and can be logged to external systems like S3 or GCS for further analysis and chargeback workflows.
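The token-based cost attribution described in the last answer can be sketched as follows. The prices, model names, and record fields are hypothetical values chosen for illustration; real prices vary by provider and model, and this is not LiteLLM's own accounting code.

```python
from collections import defaultdict

# Hypothetical prices in USD per one million tokens.
PRICE_PER_MILLION = {
    "model-a": {"input": 2.0, "output": 6.0},
    "model-b": {"input": 1.0, "output": 1.0},
}


def request_cost(model, prompt_tokens, completion_tokens):
    """Cost of one request, computed from its actual token usage."""
    price = PRICE_PER_MILLION[model]
    total = prompt_tokens * price["input"] + completion_tokens * price["output"]
    return total / 1_000_000


def spend_by(records, dimension):
    """Sum request costs grouped by a record field such as 'key' or 'team'."""
    totals = defaultdict(float)
    for rec in records:
        totals[rec[dimension]] += request_cost(
            rec["model"], rec["prompt_tokens"], rec["completion_tokens"]
        )
    return dict(totals)


# Example usage records (made-up data).
usage = [
    {"key": "key-1", "team": "search", "model": "model-a",
     "prompt_tokens": 1000, "completion_tokens": 500},
    {"key": "key-2", "team": "search", "model": "model-a",
     "prompt_tokens": 2000, "completion_tokens": 0},
    {"key": "key-3", "team": "support", "model": "model-b",
     "prompt_tokens": 3000, "completion_tokens": 1000},
]

team_spend = spend_by(usage, "team")
key_spend = spend_by(usage, "key")
```

Because every record carries both a key and a team, the same usage log can be rolled up along either dimension, which is what makes chargeback reports possible.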